A Quantinuum-led team has built the quantum programming tools for real-time magic state distillation on a quantum computer

Researchers at Quantinuum and Microsoft’s Azure Quantum used the Quantum Intermediate Representation (QIR) to demonstrate a magic state distillation protocol for the first time on quantum hardware – a key element necessary for universal, fault-tolerant quantum computing

Building a quantum computer that offers advantages over classical computers is the goal of quantum computing groups worldwide. A competitive quantum computer must be “universal”, requiring the ability to perform all operations already possible on a classical computer, as well as new ones specific to quantum computing. Of course, that’s just the beginning – it should also be able to do this in a reasonable amount of time, to deal effectively with noise from the environment, and to perform computations to arbitrary accuracy.

This is a lot to get right, and over the years quantum computer scientists have described ways to solve these often-overlapping challenges. To deal with noise from the environment and achieve arbitrary accuracy, quantum computers need to be able to keep going even as noise accumulates on the quantum bits, or qubits, which hold the quantum information. Such fault tolerance may be achieved using quantum error correction, where ensembles of physical qubits are encoded into logical qubits, which are then used to counteract noise and perform computational operations called gates. Unfortunately, no single quantum error correction code plays well with the goal of universality, because every code lacks a complete universal set of fault-tolerant gates (the technical reason comes down to the way quantum gates are executed between logical qubits: the native gates on error-corrected logical qubits are known by experts as transversal gates, and they do not include all the gates needed for universal quantum computing).

The solution to this obstacle to universality is a magic state, a quantum state which provides for the missing gate when error correcting codes are used. High fidelity magic states are achieved by a process of distillation, which purifies them from other noisier magic states. It is widely recognized that magic state distillation is one of the totemic challenges on the path towards universal, fault-tolerant quantum computing. Quantinuum’s scientists, in close collaboration with a team at Microsoft, set out to demonstrate the distillation process in real-time using physical qubits on a quantum computer for the first time.

The results of this work are available in a new paper, Advances in compilation for quantum hardware -- A demonstration of magic state distillation and repeat until success protocols.

Magic state distillation

How does magic state distillation work? Imagine a factory, taking in many qubits in imperfect initial states at one end. Broadly speaking, the factory distills the imperfect states into an almost pure state with a smaller error probability, by sending them through a well-defined process over and over. In this case, the process takes in a group of five qubits. It applies a quantum error correcting code that entangles these five qubits, with four used to test whether the fifth, target qubit has been purified. If the process fails, the ensemble is discarded and the process repeats. If it succeeds, the newly distilled target qubit is kept and combined with four other successes to form a new ensemble, which then rejoins the process of continued purification. By undertaking this process many times, the purity of the magic state increases at each step, gradually moving towards the conditions required for universal, fault-tolerant quantum computing.
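To make the factory picture concrete, here is a rough back-of-the-envelope sketch in Python. It is not taken from the paper: it assumes the leading-order quadratic error suppression often quoted for five-to-one protocols (output error roughly `coeff * eps**2`, with `coeff = 5` as an illustrative default) and a constant, hypothetical per-round success probability `p_success`. The function names are made up for illustration.

```python
def error_after_levels(eps_in, levels, coeff=5.0):
    """Error rate after each level, ASSUMING eps_out = coeff * eps_in**2.

    The quadratic form and coeff=5 are illustrative assumptions,
    not figures reported in the post.
    """
    errors = [eps_in]
    for _ in range(levels):
        errors.append(coeff * errors[-1] ** 2)
    return errors


def expected_raw_states(levels, p_success, group_size=5):
    """Expected number of raw input states consumed per distilled output.

    Every attempt at a level consumes `group_size` states from the level
    below, and a level needs 1/p_success attempts on average
    (p_success is a hypothetical constant here).
    """
    return (group_size / p_success) ** levels


if __name__ == "__main__":
    for level, eps in enumerate(error_after_levels(0.01, levels=3)):
        print(f"level {level}: error ~ {eps:.3e}")
    print("raw states per output after 2 levels:",
          expected_raw_states(2, p_success=0.2))
```

Under these assumptions the error falls very quickly with each level, while the number of raw states consumed grows geometrically, which is why the process is described as a factory.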

Despite being the subject of theoretical exploration over decades, real-time magic state distillation had never been realized on a quantum computer. In typical pioneering style, the Quantinuum and Microsoft team decided to take on this challenge. But before they could get started, they recognized that their toolset would have to be significantly sharpened up.

Creating new tools for quantum programming

At the heart of magic state distillation is a highly complex repeating process, which requires state-of-the-art protocols and control flow logic built on a best-in-class programming toolset. The research team turned to Quantum Intermediate Representation (QIR) to simplify and streamline the programming of this complex quantum computing process.

QIR is a quantum-specific code representation based on the popular open-source classical LLVM intermediate language, with the addition of structures and protocols that support the maturation and modernization of quantum computing. QIR includes elements that are essential in classical computing, but which are yet to be standardized in quantum computing, such as the humble programming loop.

Loops, which often take forms like "for...next" or "do...while," are central to programming, allowing code to repeat instructions in a stepwise manner until a condition is met. In quantum computing, this is a tough challenge because loops require control flow logic and mid-circuit measurement, which are difficult to realize in a quantum computer but have been demonstrated in Quantinuum’s System Model H1-1, Powered by Honeywell. Loops are essential for realizing magic state distillation, and it’s well understood that LLVM is great at optimizing complex control flow, including loops. This made magic state distillation a natural choice for demonstrating a valuable application of QIR, and a great example of the use of a classical technique in a quantum context.

Result: demonstrating a magic state distillation protocol

The team used Quantinuum’s H1-1 quantum computer – benefiting from industry-leading features such as mid-circuit measurement, qubit reuse and feed-forward – to make possible the quantum looping required for a magic state distillation protocol, becoming the first quantum computing team ever to run a real-time magic state distillation protocol on quantum hardware.

Four ways to achieve a quantum computer programmable loop

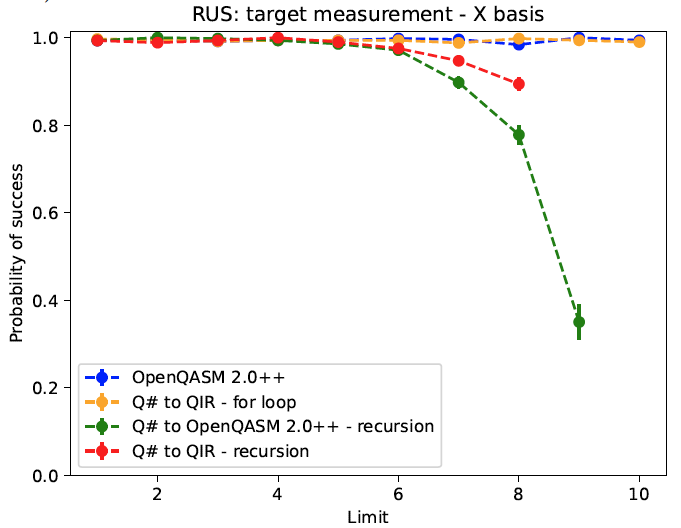

Building on this success, the team designed further experiments comparing four ways of implementing a loop, using a quantum protocol called a repeat-until-success (RUS) circuit as the test case. First, they hard-coded a loop directly in extended OpenQASM 2.0, a widely used quantum assembly language that nonetheless requires additional overhead to target advanced components on Quantinuum's H-Series quantum computers. Against this, they compared two alternative methods for coding a loop in a standard high-level programming language: controlled recursion, which was directed through both OpenQASM and QIR (giving two variants); and a native for loop made possible within QIR.
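The difference between the loop and recursion styles can be sketched classically. The Python below is only an illustration, not the team's OpenQASM or QIR code: `attempt` stands in for one run of an RUS circuit followed by a mid-circuit measurement, and `make_attempt` fakes that measurement with a seeded random number generator. All names are hypothetical.

```python
import random


def rus_loop(attempt, max_attempts):
    """Native-loop style: retry until attempt() succeeds or the limit is hit.

    Returns the number of attempts used, or None if the limit was reached.
    """
    for n in range(1, max_attempts + 1):
        if attempt():
            return n
    return None


def rus_recursive(attempt, max_attempts, used=0):
    """Controlled-recursion style: same logic, one call frame per attempt."""
    if used == max_attempts:
        return None
    if attempt():
        return used + 1
    return rus_recursive(attempt, max_attempts, used + 1)


def success_within(p_success, max_attempts):
    """Probability that at least one of max_attempts independent tries succeeds."""
    return 1.0 - (1.0 - p_success) ** max_attempts


def make_attempt(p_success, seed):
    """Stand-in for 'run circuit, measure': a seeded coin flip (an assumption)."""
    rng = random.Random(seed)
    return lambda: rng.random() < p_success


if __name__ == "__main__":
    print(rus_loop(make_attempt(0.5, seed=1), max_attempts=10))
    print(rus_recursive(make_attempt(0.5, seed=1), max_attempts=10))
    print(success_within(0.5, 3))
```

Classically the two styles are logically equivalent and return the same attempt count for the same sequence of outcomes. The sketch does not model the hardware effects behind the differences the team measured.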

The results were clear-cut: the hard-coded OpenQASM 2.0 loop performed as well as the theoretical prediction, maintaining high quality results after a number of loops, as did the natively-coded QIR for loop. The two recursive loops saw the quality of their results drop away fast as the loop limit was raised. But in a head-to-head between hard-coded OpenQASM and QIR, which converts high-level source code from many prominent and familiar languages into low-level machine code, QIR won hands-down on the basis of practicality.

Martin Roetteler, Director of Quantum Applications at Microsoft, shared: “This was a very exciting exploration of control flow logic on quantum hardware. In seeking to understand the capabilities of QIR to optimize programming structures on real hardware, we were rewarded with a clear answer, and an important demonstration of the capabilities of QIR.”

H2’s 32 qubits will power the next phase

In follow-up work, the team is now preparing to run a logical magic state protocol on the H2-1 quantum computer with its 32 high-fidelity qubits, and hopes to become the first group to successfully achieve logical magic state distillation. The features and fidelity offered by the H2 make it one of the best quantum computers currently capable of shooting for such a major milestone on the journey towards fault tolerance, while the current work demonstrates that, in QIR, the necessary control flow logic is now available to achieve it.

The paper discussed in this post was authored by Natalie C. Brown, John P. Campora III, Cassandra Granade, Bettina Heim, Stefan Wernli, Ciaran Ryan-Anderson, Dominic Lucchetti, Adam Paetznick, Martin Roetteler, Krysta Svore and Alex Chernoguzov.

About Quantinuum

Quantinuum, the world’s largest integrated quantum company, pioneers powerful quantum computers and advanced software solutions. Quantinuum’s technology drives breakthroughs in materials discovery, cybersecurity, and next-gen quantum AI. With over 500 employees, including 370+ scientists and engineers, Quantinuum leads the quantum computing revolution across continents.

This month, Quantinuum welcomed its global user community to the first-ever Q-Net Connect, an annual forum designed to spark collaboration, share insights, and accelerate innovation across our full-stack quantum computing platforms. Over two days, users came together not only to learn from one another, but to build the relationships and momentum that we believe will help define the next chapter of quantum computing.

Q-Net Connect 2026 drew over 170 attendees from around the world to Denver, Colorado, including representatives from commercial enterprises and startups, academia and research institutions, and the public sector and non-profits - all users of Quantinuum systems.

The program was packed with inspiring keynotes, technical tracks, and customer presentations. Attendees heard from leaders at Quantinuum, as well as our partners at NVIDIA, JPMorganChase and BlueQubit; professors from the University of New Mexico, the University of Nottingham and Harvard University; national labs, including NIST, Oak Ridge National Laboratory, Sandia National Laboratories and Los Alamos National Laboratory; and other distinguished guests from across the global quantum ecosystem.

Congratulations to Q-Net Connect 2026 Award Recipients!

The mission of the Quantinuum Q-Net user community is to create a space for shared learning, collaboration and connection for those who adopt Quantinuum’s hardware, software and middleware platform. At this year’s Q-Net Connect, we awarded four organizations who made notable efforts to champion this effort.

- JPMorganChase received the ‘Guppy Adopter Award’ for their exemplary adoption of our quantum programming language, Guppy, in their research workflows.

- Phasecraft, a UK and US-based quantum algorithms startup, received the ‘Rising Star’ award for demonstrating exceptional early impact and advancing science using Quantinuum hardware, which they published in a December 2025 paper.

- Qedma, a quantum software startup, received the ‘Startup Partner Engagement’ award for their sustained engagement with Quantinuum platforms dating back to our first commercially deployed quantum computer, H1.

- Anna Dalmasso from the University of Nottingham received our ‘New Student Award’ for her impressive debut project on Quantinuum hardware and for delivering outstanding results as a new Q-Net student user.

Congratulations, again, and thank you to everyone who contributed to the success of the first Q-Net Connect!

Become a Q-Net Member

Q-Net offers year‑round support through user access, developer tools, documentation, trainings, webinars, and events. Members enjoy many exclusive benefits, including being the first to hear about exclusive content, publications and promotional offers.

By joining the community, you will be invited to exclusive gatherings to hear about the latest breakthroughs and connect with industry experts driving quantum innovation. Members also get access to Q‑Net Connect recordings and stay connected for future community updates.

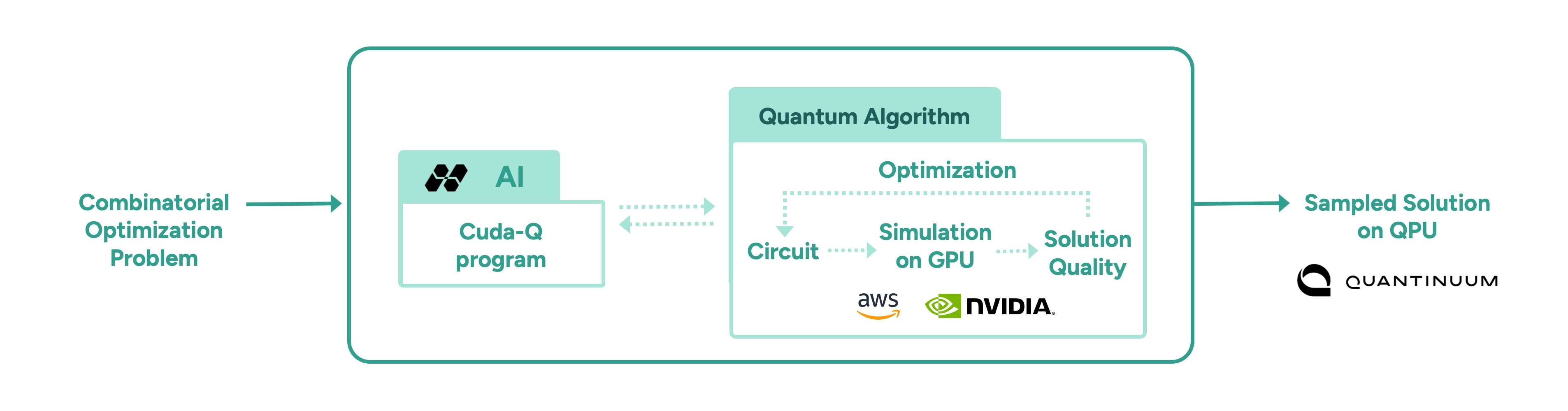

In a follow-up to our recent work with Hiverge using AI to discover algorithms for quantum chemistry, we’ve teamed up with Hiverge, Amazon Web Services (AWS) and NVIDIA to explore using AI to improve algorithms for combinatorial optimization.

With the rapid rise of Large Language Models (LLMs), people started asking “what if AI agents can serve as on-demand algorithm factories?” We have been working with Hiverge, an algorithm discovery company, AWS, and NVIDIA, to explore how LLMs can accelerate quantum computing research.

Hiverge – named for Hive, an AI that can develop algorithms – aims to make quantum algorithm design more accessible to researchers by translating high-level problem descriptions in mostly natural language into executable quantum circuits. The Hive takes the researcher’s initial sketch of an algorithm, as well as special constraints the researcher enumerates, and evolves it to a new algorithm that better meets the researcher’s needs. The output is expressed in terms of a familiar programming language, like Guppy or NVIDIA CUDA-Q, making it particularly easy to implement.

The AI is called a “Hive” because it is a collective of LLM agents, all of whom are editing the same codebase. In this work, the Hive was made up of LLM powerhouses such as Gemini, ChatGPT, Claude, Llama, as well as NVIDIA Nemotron, which was accessed through AWS’ Amazon Bedrock service. Many models are included because researchers know that diversity is a strength – just like a team of human researchers working in a group, a variety of perspectives often leads to the strongest result.

Once the LLMs are assembled, the Hive calls on them to do the work writing the desired algorithm; no new training is required. The algorithms are then executed and their ‘fitness’ (how well they solve the problem) is measured. Unfit programs do not survive, while the fittest ones evolve to the next generation. This process repeats, much like the evolutionary process of nature itself.
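The generate, score and select cycle described above can be sketched as a toy evolutionary loop. This is not the Hive's implementation: in the real system the candidates are programs and the edits come from LLM agents, whereas here a candidate is just a number, `mutate` is a random nudge, and `fitness` is a made-up scoring function. Every name is hypothetical.

```python
import random


def evolve(population, fitness, mutate, generations, survivors, rng):
    """Keep the fittest `survivors` each generation and refill by mutating them.

    Because the survivors are carried over unchanged, the best fitness
    in the population can never decrease from one generation to the next.
    """
    population = list(population)
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        parents = ranked[:survivors]
        children = [mutate(rng.choice(parents), rng)
                    for _ in range(len(population) - survivors)]
        population = parents + children
    return max(population, key=fitness)


def toy_fitness(x):
    """Toy objective (an assumption): highest score at x = 3."""
    return -(x - 3.0) ** 2


def toy_mutate(x, rng):
    """Stand-in for an agent's edit: a small random perturbation."""
    return x + rng.gauss(0.0, 0.5)


if __name__ == "__main__":
    rng = random.Random(0)
    start = [rng.uniform(-10.0, 10.0) for _ in range(20)]
    best = evolve(start, toy_fitness, toy_mutate,
                  generations=30, survivors=5, rng=rng)
    print("best candidate:", best)
```

Carrying the survivors over unchanged is a design choice: it guarantees a good candidate is never lost to an unlucky mutation.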

After evolution, the fittest algorithm is selected by the researchers and tested on other instances of the problem. This is a crucial step as the researchers want to understand how well it can generalize.

In this most recent work, the joint team explored how AI can assist in the discovery of heuristic quantum optimization algorithms, a class of algorithms aimed at improving efficiency across critical workstreams. These span challenges like optimal power grid dispatch and storage placement, arranging fuel inside nuclear reactors, and molecular design and reaction pathway optimization in drug, material, and chemical discovery, where solutions could translate into maximized operational efficiency, dramatically reduced costs, and rapidly accelerated innovation.

In other AI approaches, such as reinforcement learning, models are trained to solve a problem, but the resulting "algorithm" is effectively ‘hidden’ within a neural network. Here, the algorithm is written in Guppy or CUDA-Q (or Python), making it human-interpretable and easier to deploy on new problem instances.

This work leveraged the NVIDIA CUDA-Q platform, running on powerful NVIDIA GPUs made accessible by AWS. Its state-of-the-art accelerated computing was crucial; the research explored highly complex problems, challenges that lie at the edge of classical computing capacity. Before running anything on Quantinuum’s quantum computer, the researchers first used NVIDIA accelerated computing to simulate the quantum algorithms and assess their fitness. Once a promising algorithm is discovered, it can then be deployed on quantum hardware, creating an exciting new approach for scaling quantum algorithm design.

More broadly, this work points to one of many ways in which classical compute, AI, and quantum computing are most powerful in symbiosis. AI can be used to improve quantum, as demonstrated here, just as quantum can be used to extend AI. Looking ahead, we envision AI evolving programs that express a combination of algorithmic primitives, much like human mathematicians, such as Peter Shor and Lov Grover, have done. After all, both humans and AI can learn from each other.

As quantum computing power grows, so does the difficulty of error correction. Meeting that demand requires tight integration with high-performance classical computing, which is why we’ve partnered with NVIDIA to push the boundaries of real-time decoding performance.

Realizing the full power of quantum computing requires more than just qubits: it requires error rates low enough to run meaningful algorithms at scale. Physical qubits are sensitive to noise, which limits their capacity to handle calculations beyond a certain scale. To move beyond these limits, physical qubits must be combined into logical qubits, with errors continuously detected and corrected in real time before they can propagate and corrupt the calculation. This approach, known as fault tolerance, is a foundational requirement for any quantum computer intended to solve problems of real-world significance.

Part of the challenge of fault tolerance is the computational complexity of correcting errors in real time. Doing so involves sending the error syndrome data to a classical co-processor, solving a complex mathematical problem on that processor, then sending the resulting correction back to the quantum processor - all fast enough that it doesn’t slow down the quantum computation. For this reason, Quantum Error Correction (QEC) is currently one of the most demanding use-cases for tight coupling between classical and quantum computing.
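The measure, decode and correct round trip can be illustrated with the simplest possible case. The sketch below is a classical three-bit repetition code with a lookup-table decoder, chosen only because it fits in a few lines. It is an assumption for illustration and is far simpler than the codes and decoders used in real-time quantum error correction.

```python
def measure_syndrome(bits):
    """Parity checks on neighbouring bits; (0, 0) means no error detected."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


# Decoder: maps each syndrome to the single bit most likely to have flipped.
# A lookup table works here only because the code is tiny.
DECODER = {
    (0, 0): None,  # no correction needed
    (1, 0): 0,     # first bit disagrees with the other two
    (1, 1): 1,     # middle bit disagrees with both neighbours
    (0, 1): 2,     # last bit disagrees with the other two
}


def correct(bits):
    """One round: extract the syndrome, decode it, apply the correction."""
    flip = DECODER[measure_syndrome(bits)]
    corrected = list(bits)
    if flip is not None:
        corrected[flip] ^= 1
    return corrected


if __name__ == "__main__":
    for noisy in ([0, 0, 0], [0, 1, 0], [1, 1, 0]):
        print(noisy, "->", measure_syndrome(noisy), "->", correct(noisy))
```

In a real system the decoding step is the expensive part, and it must finish before the next round of syndrome data arrives.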

Given the difficulty of the task, we have partnered with NVIDIA, leaders in accelerated computing. With the help of NVIDIA’s ultra-fast GPUs (and the GPU-accelerated BP-OSD decoder developed by NVIDIA as part of the NVIDIA CUDA-Q QEC library), we were able to demonstrate real-time decoding of Helios’ qubits, all in a system that can be connected directly to our quantum processors using NVIDIA NVQLink.

While real-time decoding has been demonstrated before (notably, by our own scientists in this study), previous demonstrations were limited in their scalability and complexity.

In this demonstration, we used Bring’s code, a high-rate code that is possible with our all-to-all connectivity, to encode our physical qubits into noise-resilient logical qubits. Once we had them encoded, we ran gates and also let them idle to see if we could catch and correct errors quickly and efficiently. We submitted the circuits via both NVIDIA CUDA-Q and our own Guppy language, underlining our commitment to accessible, ecosystem-friendly quantum computing.

The results were excellent: we were able to perform low-latency decoding that returned results in the time we needed, even for the faster clock cycles that we expect in future generation machines.

A key part of the achievement here is that we performed something called “correlated” decoding. In correlated decoding, you offload work that would normally be performed on the QPU onto the classical decoder. This is because, in ‘standard’ decoding, as you improve your error correction capabilities, it takes more and more time on the QPU. Correlated decoding elides this cost, saving QPU time for the tasks that only the quantum computer can do.

Stay tuned for our forthcoming paper with all the details.