Quantinuum Researchers Demonstrate a New Optimization Algorithm that Delivers Solutions on H2 Quantum Computer

Algorithm reliably and consistently solves combinatorial optimization problems using minimal quantum resources

In a meaningful advance in an important area of industrial and real-world relevance, Quantinuum researchers have demonstrated a quantum algorithm capable of solving complex combinatorial optimization problems while making the most of available quantum resources.

Results on the new H2 quantum computer evidenced a remarkable ability to solve combinatorial optimization problems with as few quantum resources as those employed by just one layer of the quantum approximate optimization algorithm (QAOA), the current and traditional workhorse of quantum heuristic algorithms.
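For readers less familiar with QAOA, the sketch below simulates a single layer on a state vector. It starts from the uniform superposition, applies a cost-dependent phase $e^{-i\gamma C}$, and then applies the transverse-field mixer $e^{-i\beta\sum_k X_k}$. This is a generic textbook construction written in NumPy for illustration, not Quantinuum's implementation. The function name `qaoa1_probs` and the convention of passing the cost as a vector over all $2^n$ bitstrings are assumptions.

```python
import numpy as np

def qaoa1_probs(cost, gamma, beta):
    """Output distribution of 1-layer QAOA for a diagonal cost function.

    cost: array of length 2**n holding C(x) for every bitstring x.
    """
    n = len(cost).bit_length() - 1
    # |+>^n: uniform superposition over all 2**n bitstrings
    psi = np.full(len(cost), 1 / np.sqrt(len(cost)), dtype=complex)
    # Phase separator exp(-i*gamma*C) is diagonal in the computational basis
    psi = np.exp(-1j * gamma * cost) * psi
    # Mixer exp(-i*beta*X) = cos(beta) I - i sin(beta) X on each qubit
    rx = np.array([[np.cos(beta), -1j * np.sin(beta)],
                   [-1j * np.sin(beta), np.cos(beta)]])
    psi = psi.reshape([2] * n)
    for q in range(n):
        psi = np.moveaxis(np.tensordot(rx, psi, axes=([1], [q])), 0, q)
    return np.abs(psi.reshape(-1)) ** 2
```

With $\gamma = \beta = 0$ both operators reduce to the identity, so the output is uniform; that gives a quick sanity check.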

Optimization problems are common in industry in contexts such as route planning, scheduling, cost optimization and logistics. However, as the number of variables increases and optimization problems grow larger and more complex, finding satisfactory solutions using classical algorithms becomes increasingly difficult.

Recent research suggests that certain quantum algorithms might be capable of solving combinatorial optimization problems better than classical algorithms. The realization of such quantum algorithms can therefore potentially increase the efficiency of industrial processes.

However, making these algorithms effective on near-term quantum devices, and even on future generations of more capable quantum computers, presents a technical challenge: quantum resources must be kept as low as possible to protect the algorithm from the unavoidable effects of quantum noise.

Sebastian Leontica and Dr. David Amaro, a senior research scientist at Quantinuum, explain their advances in a new paper, “Exploring the neighborhood of 1-layer QAOA with Instantaneous Quantum Polynomial circuits,” published on arXiv. This is one of several papers, published at the launch of Quantinuum’s H2, that highlight the unparalleled power of the newest generation of the H-Series, Powered by Honeywell.

“We should strive to use as few quantum resources as possible no matter how good a quantum computer we are operating on, which means using the smallest possible number of qubits that fit within the problem size and a circuit that is as shallow as possible,” Dr. Amaro said. “Our algorithm uses the fewest possible resources and still achieves good performance.”

The researchers use a parameterized instantaneous quantum polynomial (IQP) circuit of the same depth as 1-layer QAOA to incorporate corrections that would otherwise require multiple QAOA layers. Another differentiating feature of the algorithm is that the parameters of the IQP circuit can be efficiently trained on a classical computer, avoiding some of the training issues of other algorithms such as QAOA. Critically, the circuit takes full advantage of features available on Quantinuum’s devices, including parameterized two-qubit gates, all-to-all connectivity, and high-fidelity operations.
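An IQP circuit has the form $H^{\otimes n} D(\theta) H^{\otimes n}$ applied to $|0\dots0\rangle$, where $D(\theta)$ is diagonal in the computational basis. The NumPy sketch below builds one with a parameterized $ZZ$ phase on every pair of qubits (the all-to-all pattern that full connectivity allows) plus single-qubit $Z$ phases, and returns its output distribution. The parameter layout and the names `iqp_probs`, `theta_zz`, and `theta_z` are illustrative assumptions; the paper's exact parameterization, its classical training procedure, and its QAOA warm start are not reproduced here.

```python
import numpy as np

def spin_table(n):
    """Rows are all 2**n bitstrings as spins: bit 0 -> +1, bit 1 -> -1."""
    idx = np.arange(2 ** n)
    bits = (idx[:, None] >> np.arange(n - 1, -1, -1)) & 1
    return 1 - 2 * bits

def hadamard_all(psi, n):
    """Apply a Hadamard gate to every qubit of an n-qubit state vector."""
    h = np.array([[1, 1], [1, -1]]) / np.sqrt(2)
    psi = psi.reshape([2] * n)
    for q in range(n):
        psi = np.moveaxis(np.tensordot(h, psi, axes=([1], [q])), 0, q)
    return psi.reshape(-1)

def iqp_probs(theta_zz, theta_z):
    """Output distribution of H^n D(theta) H^n |0...0>."""
    n = len(theta_z)
    z = spin_table(n)
    # Diagonal phase: sum_{i<j} theta_ij z_i z_j + sum_i theta_i z_i
    upper = np.triu(theta_zz, k=1)
    phase = np.einsum("bi,ij,bj->b", z, upper, z) + z @ theta_z
    psi = np.zeros(2 ** n, dtype=complex)
    psi[0] = 1.0
    psi = hadamard_all(psi, n)
    psi = np.exp(-1j * phase) * psi
    psi = hadamard_all(psi, n)
    return np.abs(psi) ** 2
```

With all angles set to zero, $D$ is the identity and the two Hadamard layers cancel, so the circuit returns $|0\dots0\rangle$ with certainty. Every gate inside $D$ is diagonal, so all of them commute and can in principle be applied in any order.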

“Our numerical simulations and experiments on the new H2 quantum computer at small scale indicate that this heuristic algorithm, compared to 1-layer QAOA, is expected to amplify the probability of sampling good or even optimal solutions of large optimization problems,” Dr. Amaro said. “We now want to understand how the solution quality and runtime of our algorithm compares to the best classical algorithms.”

This algorithm will be useful for current quantum computers as well as larger machines farther along the Quantinuum hardware roadmap.

How the Experiment Worked

The goal of this project was to provide a quantum heuristic algorithm for combinatorial optimization that returns better solutions and uses fewer quantum resources than state-of-the-art quantum heuristics. The researchers used a fully connected parameterized IQP circuit, warm-started from 1-layer QAOA. For a problem with $n$ binary variables, the circuit contained up to $n(n-1)/2$ two-qubit gates, and the researchers employed only $2^{0.32n}$ shots.
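The resource counts above are easy to tabulate. The helper below, with the hypothetical name `iqp_resources`, evaluates the all-to-all gate bound $n(n-1)/2$ and the shot budget $2^{0.32n}$ for a few problem sizes. For $n = 30$ it gives at most 435 two-qubit gates and about 776 shots, which lines up with the 30-qubit H2 run reported in this post. Rounding the shot count to the nearest integer is an assumption.

```python
def iqp_resources(n, shot_exponent=0.32):
    """Two-qubit gate bound and shot budget for an n-variable problem."""
    two_qubit_gates = n * (n - 1) // 2   # at most one ZZ gate per pair of qubits
    shots = round(2 ** (shot_exponent * n))
    return two_qubit_gates, shots

for n in (10, 20, 30, 32):
    gates, shots = iqp_resources(n)
    print(f"n={n:2d}: <= {gates} two-qubit gates, ~{shots} shots")
```

The gate count grows quadratically while the shot budget grows exponentially, but with a small exponent: going from 30 to 32 variables adds 61 gates and raises the budget by a factor of $2^{0.64} \approx 1.56$.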

The algorithm showed improved performance on the Sherrington-Kirkpatrick (SK) optimization problem compared to 1-layer QAOA. In numerical simulations, the average runtime to find the optimal solution scaled as $2^{0.31n}$, compared with $2^{0.5n}$ for 1-layer QAOA.
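For reference, an SK instance assigns a random coupling $J_{ij}$ to every pair of spins and asks for the configuration $z \in \{\pm 1\}^n$ that minimizes $C(z) = \sum_{i<j} J_{ij} z_i z_j$. The sketch below draws Gaussian couplings (one common convention; the instance distribution used in the paper is an assumption here) and finds the optimum by brute force. Brute force is practical only for small $n$, which is exactly why heuristics matter at larger sizes. The function names are hypothetical.

```python
import numpy as np

def sk_instance(n, seed=None):
    """Random SK couplings J_ij ~ N(0, 1) for i < j (upper triangle only)."""
    rng = np.random.default_rng(seed)
    return np.triu(rng.standard_normal((n, n)), k=1)

def all_spins(n):
    """All 2**n spin configurations as rows of +1/-1 entries."""
    idx = np.arange(2 ** n)
    bits = (idx[:, None] >> np.arange(n - 1, -1, -1)) & 1
    return 1 - 2 * bits

def brute_force_ground_state(J):
    """Return (energy, spins) minimizing C(z) = sum_{i<j} J_ij z_i z_j."""
    z = all_spins(J.shape[0])
    energies = np.einsum("bi,ij,bj->b", z, J, z)
    best = np.argmin(energies)
    # C(z) == C(-z), so the globally flipped configuration is equally optimal.
    return energies[best], z[best]
```

Without local fields, the cost is invariant under flipping every spin, so optima come in pairs; fixing one spin removes that redundancy.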

Experimental results on our new H2 quantum computer and emulator confirmed that the new optimization algorithm outperforms 1-layer QAOA and reliably solves complex optimization problems. The optimal solution was found for 136 out of 312 instances, four of which were for the maximum size of 32 qubits. A 30-qubit instance was solved optimally on the H2 device, which means, remarkably, that at least one of the 776 shots measured after performing 432 two-qubit gates corresponds to the unique optimal solution in the huge set of $2^{30} > 10^9$ candidate solutions.
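Why is a budget of roughly $2^{0.32n}$ shots enough? If each shot independently returns the optimum with probability $p$, the chance that at least one of $S$ shots hits it is $1 - (1-p)^S$. Plugging in the average scaling $p \approx 2^{-0.31n}$ from the numerical simulations discussed in this post, together with the 776 shots of the 30-qubit run, gives roughly 0.7. This is a back-of-the-envelope estimate that ignores hardware noise and instance-to-instance variation, so it should not be read as a prediction for any particular instance. The name `hit_probability` is hypothetical.

```python
def hit_probability(p, shots):
    """Chance that at least one of `shots` independent samples is optimal."""
    return 1.0 - (1.0 - p) ** shots

n = 30
p_opt = 2 ** (-0.31 * n)      # assumed average optimal-sample probability
print(f"p_opt = {p_opt:.2e}, P(hit in 776 shots) = {hit_probability(p_opt, 776):.2f}")
```

Because $S \cdot p = 2^{0.32n} \cdot 2^{-0.31n} = 2^{0.01n}$ stays close to 1 across the sizes studied, the shot budget grows just fast enough to keep the expected number of optimal hits around one.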

These results indicate that the algorithm, in combination with H2 hardware, is capable of solving hard optimization problems using minimal quantum resources in the presence of real hardware noise.

Quantinuum researchers expect that these promising results at small scale will encourage the further study of new quantum heuristic algorithms at the relevant scale for real-world optimization problems, which requires a better understanding of their performance under realistic conditions.

Speedup of IQP over QAOA

Numerical simulations of 256 random SK instances for each problem size from 4 to 29 qubits. Graph A shows the probability of sampling the optimal solution with the IQP circuit, for which the average is $2^{-0.31n}$. Graph B shows the enhancement factor compared to 1-layer QAOA, for which the average is $2^{0.23n}$. These results indicate that Quantinuum’s algorithm has significantly better runtime than 1-layer QAOA.
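One common way to extract exponents like those in the caption is to fit a straight line to $\log_2$ of the averaged quantity against $n$. The sketch below performs that fit with `numpy.polyfit`. Whether the study averages probabilities or their logarithms before fitting is not stated here, so the averaging step is left to the caller; the function name `fit_exponent` is hypothetical, and the check uses values generated exactly from $2^{-0.31n}$, not real data.

```python
import numpy as np

def fit_exponent(ns, values):
    """Fit values ~ c * 2**(a*n) by least squares on log2; return (a, c)."""
    slope, intercept = np.polyfit(np.asarray(ns, dtype=float),
                                  np.log2(values), deg=1)
    return slope, 2 ** intercept
```

Applied to per-size ratios $p_{\text{IQP}}/p_{\text{QAOA}}$, the same fit would give an enhancement exponent of the kind shown in Graph B.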

About Quantinuum

Quantinuum, the world’s largest integrated quantum company, pioneers powerful quantum computers and advanced software solutions. Quantinuum’s technology drives breakthroughs in materials discovery, cybersecurity, and next-gen quantum AI. With over 500 employees, including 370+ scientists and engineers, Quantinuum leads the quantum computing revolution across continents.

- University of Southern Denmark (SDU) to use Quantinuum Helios, supported by the Danish e-Infrastructure Consortium (DeiC)

- Access to Helios enables SDU to test and refine fault-tolerant algorithms and error-correction codes under realistic hardware conditions

- The collaboration supports research at a scale of 48 logical qubits, positioning Denmark at the forefront of scalable, practical quantum computing

- Researchers are exploring the scientific foundations for future development of applications in fields including pharmaceuticals, finance, and defense

Progress in quantum computing is measured by hardware advances plus the algorithms and quantum error-correction codes that turn quantum systems into useful computational tools.

Thanks to recent hardware advances, researchers are increasingly sharpening their tools to probe the performance of quantum algorithms and understand how they behave in realistic conditions – where stability, system architecture and algorithm design all shape performance.

A new Denmark-based collaboration between the University of Southern Denmark (SDU), Quantinuum, and the Danish e-Infrastructure Consortium (DeiC) will utilize Quantinuum Helios. Researchers at SDU’s Centre for Quantum Mathematics, led by Jørgen Ellegaard Andersen, will use Helios to pursue research into topological quantum computing.

Their work could help explain how and why successful quantum algorithms perform as they do, informing the development of high-performance algorithms suited to emerging quantum systems. They’re exploring the scientific foundations that support future quantum applications across areas including pharmaceuticals, finance, and defense.

“We are thrilled to gain access to Quantinuum’s high-fidelity Helios system. This collaboration gives us a unique opportunity to test the limits of our algorithms and evaluate system performance, while advancing fundamental research and laying the foundation for future applications.”

— Professor Jørgen Ellegaard Andersen, Director of the Centre for Quantum Mathematics at University of Southern Denmark

Why topological methods matter

Topological quantum computing is an area of research that connects quantum computation with deep mathematical structures. It includes the study of error-correcting codes known as surface codes, which encode quantum information in the global properties of systems of physical qubits.

The research team will explore how these codes behave, and how they may support the development of fault-tolerant quantum algorithms in practical implementations under realistic conditions.

This distinction between theory and practical implementation matters. In theory, topological approaches offer a rich framework for designing algorithms and error-correcting codes. In practice, researchers need to understand how those ideas perform when implemented on real systems, where questions of noise, stability, overhead, and scaling become central. The collaboration will allow the SDU team to investigate these questions directly.
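Overhead is one of the questions that only becomes concrete in implementation. As a textbook reference point (not a description of Helios or of the codes the SDU team will study), a distance-$d$ rotated surface code uses $d^2$ data qubits and $d^2 - 1$ measurement qubits per logical qubit, and it can correct up to $\lfloor (d-1)/2 \rfloor$ arbitrary single-qubit errors. The helper below, with the hypothetical name `rotated_surface_code_cost`, tabulates these counts.

```python
def rotated_surface_code_cost(d):
    """Physical-qubit count and correctable errors for one distance-d patch."""
    if d < 3 or d % 2 == 0:
        # Odd distances >= 3 are the usual convention for this layout.
        raise ValueError("use an odd code distance d >= 3")
    data = d * d
    ancilla = d * d - 1            # one measurement qubit per stabilizer
    return {"data": data, "ancilla": ancilla,
            "total": data + ancilla, "correctable": (d - 1) // 2}

for d in (3, 5, 7):
    print(d, rotated_surface_code_cost(d))
```

The quadratic growth in physical qubits per logical qubit is one reason that questions of overhead and scaling become central on real hardware.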

New ways to benchmark quantum processors

Beyond individual algorithms and codes, the research will also develop tools for benchmarking quantum processors. The goal is to develop new ways to characterize fidelity and stability in regimes that can be difficult to access.
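For context, a standard baseline for characterizing gate fidelity is randomized benchmarking. There, the average sequence fidelity decays as $F(m) = A p^m + B$ with sequence length $m$, and the error per Clifford is $r = (1-p)(d-1)/d$ for Hilbert-space dimension $d$. The sketch below recovers $p$ and $r$ with a log-linear fit, assuming the offset $B$ is known. That is a simplification of the usual three-parameter nonlinear fit, and it is shown only as a familiar reference point, not as the team's approach. The function name is hypothetical.

```python
import numpy as np

def rb_error_per_clifford(lengths, fidelities, offset, dim=2):
    """Fit F(m) = A * p**m + B with B fixed, then return (p, r)."""
    # With B known, log(F - B) = log(A) + m * log(p) is linear in m.
    slope, _ = np.polyfit(np.asarray(lengths, dtype=float),
                          np.log(np.asarray(fidelities) - offset), deg=1)
    p = np.exp(slope)
    r = (1 - p) * (dim - 1) / dim
    return p, r
```

On real data, noise in $F(m)$ near the offset makes $\log(F - B)$ unstable at long sequence lengths, which is one reason the full nonlinear fit is usually preferred.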

The team will also explore hybrid quantum–classical approaches, including machine-learning techniques assisted by quantum hardware, to study the mathematical structures at the heart of topological quantum computing. This work reflects a broader field of research in which quantum and classical methods are used together, each contributing to parts of a computational problem.

Strengthening Denmark’s quantum ecosystem

The collaboration reflects the growing role of national quantum infrastructure in supporting research and talent development. Denmark has a long tradition of scientific innovation, and this collaboration is intended to support the country’s continued development in quantum technology.

The initiative is supported by DeiC, which played a central role in securing funding and enabling access to Quantinuum’s systems. DeiC has been assigned a particular role in developing and coordinating quantum infrastructure initiatives for the benefit of universities and industry, operating without its own commercial, sectoral, or geographical interests. This includes securing dedicated access to quantum computers, providing advisory services, and supporting the development of new talent in the Danish quantum sector.

“DeiC’s special effort to secure funding and access for this research initiative is rooted in our organization’s role in relation to the Danish Government’s strategy for quantum technology.”

— Henrik Navntoft Sønderskov, Head of Quantum at Danish e-Infrastructure Consortium

This collaboration promises to accelerate the development of practical algorithms. It is grounded in fundamental science – but its focus is practical: discovering and testing mathematical approaches to topological quantum computing that can be implemented, evaluated, and improved on real quantum hardware.

That work requires both theoretical insight and access to a system such as Helios capable of supporting meaningful scientific work.

This month, Quantinuum welcomed its global user community to the first-ever Q-Net Connect, an annual forum designed to spark collaboration, share insights, and accelerate innovation across our full-stack quantum computing platforms. Over two days, users came together not only to learn from one another, but to build the relationships and momentum that we believe will help define the next chapter of quantum computing.

Q-Net Connect 2026 drew over 170 attendees from around the world to Denver, Colorado, including representatives from commercial enterprises and startups, academia and research institutions, and the public sector and non-profits, all of them users of Quantinuum systems.

The program was packed with inspiring keynotes, technical tracks, and customer presentations. Attendees heard from leaders at Quantinuum, as well as our partners at NVIDIA, JPMorganChase and BlueQubit; professors from the University of New Mexico, the University of Nottingham and Harvard University; national labs, including NIST, Oak Ridge National Laboratory, Sandia National Laboratories and Los Alamos National Laboratory; and other distinguished guests from across the global quantum ecosystem.

Congratulations to Q-Net Connect 2026 Award Recipients!

The mission of the Quantinuum Q-Net user community is to create a space for shared learning, collaboration and connection for those who adopt Quantinuum’s hardware, software and middleware platform. At this year’s Q-Net Connect, we recognized four recipients who made notable efforts to champion this mission.

- JPMorganChase received the ‘Guppy Adopter Award’ for their exemplary adoption of our quantum programming language, Guppy, in their research workflows.

- Phasecraft, a UK and US-based quantum algorithms startup, received the ‘Rising Star’ award for demonstrating exceptional early impact and advancing science using Quantinuum hardware, which they published in a December 2025 paper.

- Qedma, a quantum software startup, received the ‘Startup Partner Engagement’ award for their sustained engagement with Quantinuum platforms dating back to our first commercially deployed quantum computer, H1.

- Anna Dalmasso from the University of Nottingham received our ‘New Student Award’ for her impressive debut project on Quantinuum hardware and for delivering outstanding results as a new Q-Net student user.

Congratulations, again, and thank you to everyone who contributed to the success of the first Q-Net Connect!

Become a Q-Net Member

Q-Net offers year‑round support through user access, developer tools, documentation, trainings, webinars, and events. Members enjoy many benefits, including being the first to hear about exclusive content, publications and promotional offers.

By joining the community, you will be invited to exclusive gatherings to hear about the latest breakthroughs and connect with industry experts driving quantum innovation. Members also get access to Q‑Net Connect recordings and stay connected for future community updates.

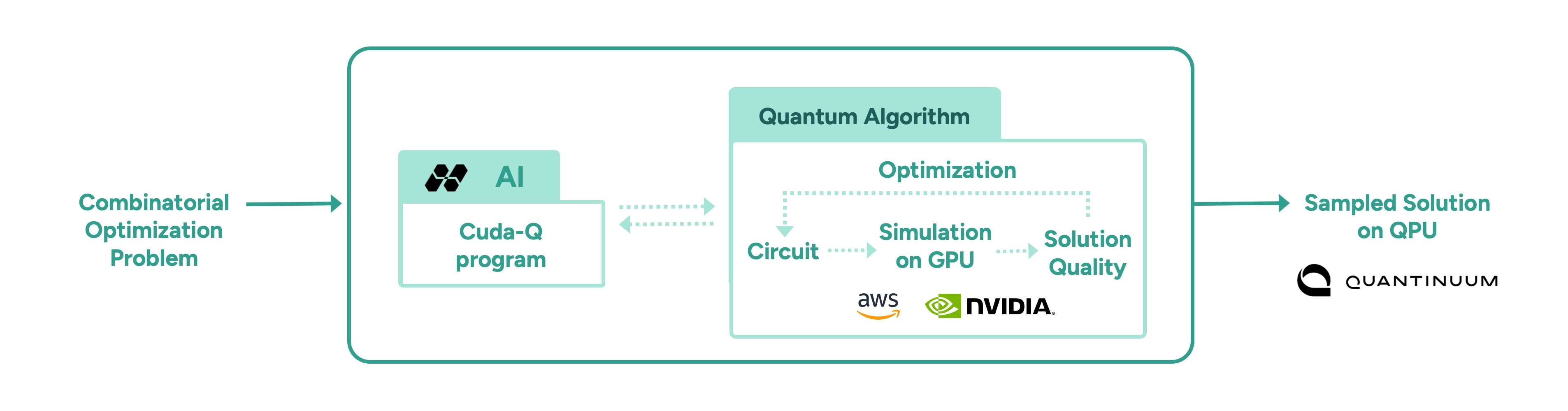

In a follow-up to our recent work with Hiverge using AI to discover algorithms for quantum chemistry, we’ve teamed up with Hiverge, Amazon Web Services (AWS) and NVIDIA to explore using AI to improve algorithms for combinatorial optimization.

With the rapid rise of Large Language Models (LLMs), people started asking, “What if AI agents could serve as on-demand algorithm factories?” We have been working with Hiverge, an algorithm discovery company, AWS, and NVIDIA to explore how LLMs can accelerate quantum computing research.

Hiverge – named for Hive, an AI that can develop algorithms – aims to make quantum algorithm design more accessible to researchers by translating high-level problem descriptions, written mostly in natural language, into executable quantum circuits. The Hive takes the researcher’s initial sketch of an algorithm, along with any special constraints the researcher specifies, and evolves it into a new algorithm that better meets the researcher’s needs. The output is written in a familiar programming language, such as Guppy or NVIDIA CUDA-Q, making it particularly easy to implement.

The AI is called a “Hive” because it is a collective of LLM agents, all of whom are editing the same codebase. In this work, the Hive was made up of LLM powerhouses such as Gemini, ChatGPT, Claude, Llama, as well as NVIDIA Nemotron, which was accessed through AWS’ Amazon Bedrock service. Many models are included because researchers know that diversity is a strength – just like a team of human researchers working in a group, a variety of perspectives often leads to the strongest result.

Once the LLMs are assembled, the Hive calls on them to do the work of writing the desired algorithm; no new training is required. The algorithms are then executed and their ‘fitness’ (how well they solve the problem) is measured. Unfit programs do not survive, while the fittest ones evolve to the next generation. This process repeats, much like the evolutionary process of nature itself.

After evolution, the fittest algorithm is selected by the researchers and tested on other instances of the problem. This is a crucial step as the researchers want to understand how well it can generalize.
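The evolve-then-test loop described above can be sketched as a simple elitist evolutionary search. In the Hive, new candidates come from LLM agents rewriting code; here a random perturbation of a parameter vector stands in for that step, and the fitness function, the instance sets, and every name below are hypothetical placeholders. The structure mirrors the text: score candidates, discard the unfit, refill the population from the survivors, repeat, and finally check the winner on instances it never saw during evolution.

```python
import numpy as np

def evolve(fitness, init, train, generations=50, pop=20, keep=5, seed=0):
    """Elitist evolution; `fitness(candidate, instances)` is higher-is-better."""
    rng = np.random.default_rng(seed)
    population = [init + rng.normal(scale=0.5, size=init.shape) for _ in range(pop)]
    for _ in range(generations):
        ranked = sorted(population, key=lambda c: fitness(c, train), reverse=True)
        survivors = ranked[:keep]                  # unfit candidates are discarded
        # Stand-in for LLM edits: mutate survivors to refill the population.
        children = [survivors[i % keep] + rng.normal(scale=0.1, size=init.shape)
                    for i in range(pop - keep)]
        population = survivors + children
    return max(population, key=lambda c: fitness(c, train))

# Toy problem: each "instance" is a target vector; fitness is negative distance.
def toy_fitness(candidate, instances):
    return -np.mean([np.linalg.norm(candidate - t) for t in instances])

rng = np.random.default_rng(42)
center = np.array([1.0, -2.0, 0.5])
train_set = [center + rng.normal(scale=0.2, size=3) for _ in range(10)]
held_out = [center + rng.normal(scale=0.2, size=3) for _ in range(10)]
best = evolve(toy_fitness, np.zeros(3), train_set)
print("held-out fitness:", toy_fitness(best, held_out))
```

Keeping the survivors unchanged in each generation (elitism) guarantees that the best training fitness never decreases; the held-out score is what indicates whether the result generalizes.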

In this most recent work, the joint team explored how AI can assist in the discovery of heuristic quantum optimization algorithms, a class of algorithms aimed at improving efficiency across critical workstreams. These span challenges like optimal power grid dispatch and storage placement, arranging fuel inside nuclear reactors, and molecular design and reaction pathway optimization in drug, material, and chemical discovery, where solutions could translate into greater operational efficiency, dramatic cost reductions, and rapid acceleration in innovation.

In other AI approaches, such as reinforcement learning, models are trained to solve a problem, but the resulting "algorithm" is effectively ‘hidden’ within a neural network. Here, the algorithm is written in Guppy or CUDA-Q (or Python), making it human-interpretable and easier to deploy on new problem instances.

This work leveraged the NVIDIA CUDA-Q platform, running on powerful NVIDIA GPUs made accessible by AWS. Its state-of-the-art accelerated computing was crucial, because the research explored highly complex problems that lie at the edge of classical computing capacity. Before running anything on Quantinuum’s quantum computer, the researchers first used NVIDIA accelerated computing to simulate the quantum algorithms and assess their fitness. Once a promising algorithm is discovered, it can then be deployed on quantum hardware, creating an exciting new approach for scaling quantum algorithm design.

More broadly, this work points to one of many ways in which classical compute, AI, and quantum computing are most powerful in symbiosis. AI can be used to improve quantum, as demonstrated here, just as quantum can be used to extend AI. Looking ahead, we envision AI evolving programs that express a combination of algorithmic primitives, much like human mathematicians, such as Peter Shor and Lov Grover, have done. After all, both humans and AI can learn from each other.