How we equip our users to unlock the full potential of H-Series Quantum Computers

Quantinuum is proud to introduce three new tools that help enterprise and academic users make full use of the world-leading capabilities of the System Model H1 and H2 quantum computers.

In a series of recent technical papers, Quantinuum researchers demonstrated the world-leading capabilities of the latest H-Series quantum computers, and the features and tools that make these accessible to our global customers and users.

Our teams used the H-Series quantum computers to directly measure and control non-abelian topological states of matter [1] for the first time, explore new ways to solve combinatorial optimization problems more efficiently [2], simulate molecular systems using logical qubits with error detection [3], probe critical states of matter [4], and exhaustively benchmark our very latest system [5].

Part of what makes such rapid technical and scientific progress possible is the effort our teams continually make to develop and improve workflow tools, helping our users to achieve successful results. In this blog post, we will explore the capabilities of three new tools in some detail, discuss their significance, and highlight their impact in recent quantum computing research.

Leakage Detection Gadget in pyTKET

“Leakage” is a quantum error process in which a qubit ends up in a state outside the computational subspace; it can significantly degrade quantum computations. To address this issue, Quantinuum has developed a leakage detection gadget in pyTKET, a Python module for interfacing with TKET, our quantum computing toolkit and optimizing compiler. This gadget, presented at the 2022 IEEE International Conference on Quantum Computing and Engineering (QCE) [6], acts as an error detection technique: it detects and excludes results affected by leakage, minimizing the impact of leakage on computations. It is also a valuable tool for measuring single-qubit and two-qubit spontaneous emission rates. H-Series users can access this open-source gadget through pyTKET, and an example notebook is available on the pyTKET GitHub repository.
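The detect-and-discard idea can be illustrated in a few lines of plain Python. This is a minimal sketch, not the pyTKET gadget itself: it assumes each shot has already been returned as a pair of data bits and leakage-flag bits, where a flag bit of 1 means leakage was signalled on that qubit. The function name `discard_leaked_shots` and the data layout are hypothetical.

```python
from collections import Counter

def discard_leaked_shots(shots):
    """Keep only shots whose leakage-flag bits are all 0.

    `shots` is a list of (data_bits, flag_bits) string pairs. This layout is
    an assumption made for illustration, not the pyTKET result format.
    """
    kept = [data for data, flags in shots if "1" not in flags]
    discard_fraction = 1 - len(kept) / len(shots) if shots else 0.0
    return Counter(kept), discard_fraction

# Four hypothetical shots; the third one has a raised leakage flag.
shots = [("00", "0"), ("11", "0"), ("01", "1"), ("00", "0")]
counts, discard_fraction = discard_leaked_shots(shots)
print(counts)            # surviving outcomes only
print(discard_fraction)  # fraction of shots thrown away
```

The discard fraction is worth tracking alongside the filtered counts, since post-selection trades a smaller sample for cleaner results.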

Mid-Circuit Measurement and Qubit Reuse (MCMR) Package

The MCMR package, built as a pyTKET compiler pass, is designed to reduce the number of qubits required for executing many types of quantum algorithms, expanding the scope of what is possible on the current-generation H-Series quantum computers.

As an example, in a recent paper [4], Quantinuum researchers applied this tool to simulate the transverse-field Ising model, using only 20 qubits to simulate a much larger 128-site system (this work is described in more detail below). By measuring qubits early in the circuit, resetting them, and reusing them elsewhere, the package ingests a raw circuit and outputs an optimized circuit that requires fewer quantum resources. A scientific paper [7] and a blog post on MCMR were published previously, highlighting its benefits and applications. H-Series customers can download this package via the Quantinuum user portal.
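To see why measuring, resetting, and reusing qubits saves resources, consider the rough sketch below. It is not the MCMR package or its algorithm: it keeps the gate order fixed and simply counts how many qubits are "live" at once if each logical qubit is released right after its last gate. The function name and the staircase circuit are hypothetical, chosen only for illustration; the circuit is not the one used in [4].

```python
def physical_qubits_with_reuse(gates):
    """Count physical qubits needed when qubits are recycled.

    `gates` is an ordered list of tuples of logical-qubit labels. A logical
    qubit occupies a physical qubit from its first gate until its last gate,
    after which it is assumed to be measured, reset, and returned to a pool.
    """
    first, last = {}, {}
    for step, gate in enumerate(gates):
        for q in gate:
            first.setdefault(q, step)  # step at which the qubit is first needed
            last[q] = step             # step after which it can be released

    live = peak = 0
    for step in range(len(gates)):
        # Allocate qubits whose first gate is at this step.
        live += sum(1 for q in first if first[q] == step)
        peak = max(peak, live)
        # Release qubits whose last gate was at this step.
        live -= sum(1 for q in last if last[q] == step)
    return peak

# Hypothetical "staircase" circuit: site i only ever interacts with site i + 1.
n_sites = 128
staircase = [(i, i + 1) for i in range(n_sites - 1)]
print(n_sites, "logical qubits,", physical_qubits_with_reuse(staircase), "needed at once")
```

Circuits with more connectivity between distant qubits allow less reuse, which is why a real compiler pass has to analyze the circuit structure rather than apply a fixed rule.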

Quantinuum H2-1 Emulator Release

To enable efficient use of Quantinuum’s second-generation processor, the System Model H2, Quantinuum has released the H2-1 emulator to give users greater flexibility with noise-informed state vector emulation. This emulator uses NVIDIA's cuQuantum SDK to accelerate quantum computing simulation workflows, approaching the limit of full state emulation on conventional classical hardware. The emulator is a faithful representation of the QPU it emulates. This is accomplished not only by using realistic noise models and noise parameters, but also by sharing the same software stack between the QPU and the emulator up until the job is routed either to the QPU or to the classical computing processors. Most notably, the emulator and the QPU use the same compiler, allowing subtle and time-dependent errors to be appropriately represented. The H2-1 emulator was initially released as a beta product alongside the System Model H2 quantum computer at launch. It runs on a GPU backend and an upgraded global framework that now offers features such as job chunking, incremental resource distribution, mid-execution job cancellation, and partial result return. Detailed information about the emulator can be found in the H2 emulator product datasheet on the Quantinuum website. H-Series customers with an H2 subscription can access the H2-1 emulator via an API or the Microsoft Azure platform.

Enabling Recent Works

Quantinuum's new enabling tools have already demonstrated their efficacy and value in recent quantum computing research, playing a vital role in advancing the field and achieving groundbreaking results. Let's expand on some notable recent examples.

All works presented here benefited from having access to our H-Series emulators. Of these, two significant demonstrations were the “Creation of Non-Abelian Topological Order and Anyons on a Trapped-Ion Processor” [1] and “Demonstration of improved 1-layer QAOA with Instantaneous Quantum Polynomial” [2]. These demonstrations involved extensive testing, debugging, and experiment design, for which the versatility of the H2-1 emulator proved invaluable, providing initial performance benchmarks in a realistic noisy environment. Researchers relied on the emulator's results to gauge algorithmic performance and make necessary adjustments, which accelerated their progress.

The MCMR package was extensively used in benchmarking the System Model H2 quantum computer’s world-leading capabilities [5]. Two application-level benchmarks performed in this work, approximating the solution to a MaxCut combinatorics problem using the quantum approximate optimization algorithm (QAOA) and accurately simulating a quantum dynamics model using a holographic quantum dynamics (HoloQUADS) algorithm, would have been too large to encode on H2's 32 qubits without the MCMR package. Further illustrating the overall value of these tools, in the HoloQUADS benchmark, there is a "bond qubit" that is particularly susceptible to errors due to leakage. The leakage detection gadget was used on this "bond qubit" at the end of the circuit, and any shots with a detected leakage error were discarded. The leakage detection gadget was also used to obtain the rate of leakage error per single-qubit and two-qubit gates, two component-level benchmarks.

In another scientific work [4], the MCMR compilation tool proved instrumental to simulating a transverse-field Ising model on 128 sites, using 20 qubits. With the MCMR package and by leveraging a state-of-the-art classical tensor-network ansatz expressed as a quantum circuit, the Quantinuum team was able to express the highly entangled ground state of the critical Ising model. The team showed that with H1-1's 20 qubits, the properties of this state could be measured on a 128-site system with very high fidelity, enabling a quantitatively accurate extraction of some critical properties of the model.

Key Takeaways

At Quantinuum, we are entirely devoted to producing a quantum hardware, middleware and software stack that leads the world on the most important benchmarks and includes features and tools that provide breakthrough benefit to our growing base of users. On NISQ hardware, "benefit" usually means getting the most performance out of today’s machines, continually pushing what is considered possible. In this blog post we describe two examples: error detection and discard using the “leakage detection gadget”, and an automated method of circuit optimization for qubit reuse. “Benefit” can also take other forms, such as productivity. Our emulator brings many benefits to our users, but the one that resonates most is productivity. As a faithful representation of our QPU performance, the emulator is an accessible tool that users have at their disposal to develop and test new, innovative algorithms. The tools and features Quantinuum releases are driven by users’ feedback; whether you are new to H-Series or a seasoned user, please reach out and let us know how we can help bring benefit to your research and use case.

Footnotes:

[1] Mohsin Iqbal et al., Creation of Non-Abelian Topological Order and Anyons on a Trapped-Ion Processor (2023), arXiv:2305.03766 [quant-ph]

[2] Sebastian Leontica and David Amaro, Exploring the neighborhood of 1-layer QAOA with Instantaneous Quantum Polynomial circuits (2022), arXiv:2210.05526 [quant-ph]

[3] Kentaro Yamamoto, Samuel Duffield, Yuta Kikuchi, and David Muñoz Ramo, Demonstrating Bayesian Quantum Phase Estimation with Quantum Error Detection (2023), arXiv:2306.16608 [quant-ph]

[4] Reza Haghshenas, et al., Probing critical states of matter on a digital quantum computer (2023), arXiv:2305.01650 [quant-ph]

[5] S. A. Moses, et al., A Race Track Trapped-Ion Quantum Processor (2023), arXiv:2305.03828 [quant-ph]

[6] K. Mayer, Mitigating qubit leakage errors in quantum circuits with gadgets and post-selection, 2022 IEEE International Conference on Quantum Computing and Engineering (QCE), Broomfield, CO, USA, (2022), pp. 809-809, doi: 10.1109/QCE53715.2022.00126.

[7] Matthew DeCross, Eli Chertkov, Megan Kohagen, and Michael Foss-Feig, Qubit-reuse compilation with mid-circuit measurement and reset (2022), arXiv:2210.08039 [quant-ph]

About Quantinuum

Quantinuum, the world’s largest integrated quantum company, pioneers powerful quantum computers and advanced software solutions. Quantinuum’s technology drives breakthroughs in materials discovery, cybersecurity, and next-gen quantum AI. With over 500 employees, including 370+ scientists and engineers, Quantinuum leads the quantum computing revolution across continents.

This month, Quantinuum welcomed its global user community to the first-ever Q-Net Connect, an annual forum designed to spark collaboration, share insights, and accelerate innovation across our full-stack quantum computing platforms. Over two days, users came together not only to learn from one another, but to build the relationships and momentum that we believe will help define the next chapter of quantum computing.

Q-Net Connect 2026 drew over 170 attendees from around the world to Denver, Colorado, including representatives from commercial enterprises and startups, academia and research institutions, and the public sector and non-profits - all users of Quantinuum systems.

The program was packed with inspiring keynotes, technical tracks, and customer presentations. Attendees heard from leaders at Quantinuum, as well as our partners at NVIDIA, JPMorganChase and BlueQubit; professors from the University of New Mexico, the University of Nottingham and Harvard University; national labs, including NIST, Oak Ridge National Laboratory, Sandia National Laboratories and Los Alamos National Laboratory; and other distinguished guests from across the global quantum ecosystem.

Congratulations to Q-Net Connect 2026 Award Recipients!

The mission of the Quantinuum Q-Net user community is to create a space for shared learning, collaboration and connection for those who adopt Quantinuum’s hardware, software and middleware platform. At this year’s Q-Net Connect, we recognized four recipients who made notable efforts to champion this mission.

- JPMorganChase received the ‘Guppy Adopter Award’ for their exemplary adoption of our quantum programming language, Guppy, in their research workflows.

- Phasecraft, a UK and US-based quantum algorithms startup, received the ‘Rising Star’ award for demonstrating exceptional early impact and advancing science using Quantinuum hardware, which they published in a December 2025 paper.

- Qedma, a quantum software startup, received the ‘Startup Partner Engagement’ award for their sustained engagement with Quantinuum platforms dating back to our first commercially deployed quantum computer, H1.

- Anna Dalmasso from the University of Nottingham received our ‘New Student Award’ for her impressive debut project on Quantinuum hardware and for delivering outstanding results as a new Q-Net student user.

Congratulations, again, and thank you to everyone who contributed to the success of the first Q-Net Connect!

Become a Q-Net Member

Q-Net offers year‑round support through user access, developer tools, documentation, trainings, webinars, and events. Members enjoy many exclusive benefits, including being the first to hear about exclusive content, publications and promotional offers.

By joining the community, you will be invited to exclusive gatherings to hear about the latest breakthroughs and connect with industry experts driving quantum innovation. Members also get access to Q‑Net Connect recordings and stay connected for future community updates.

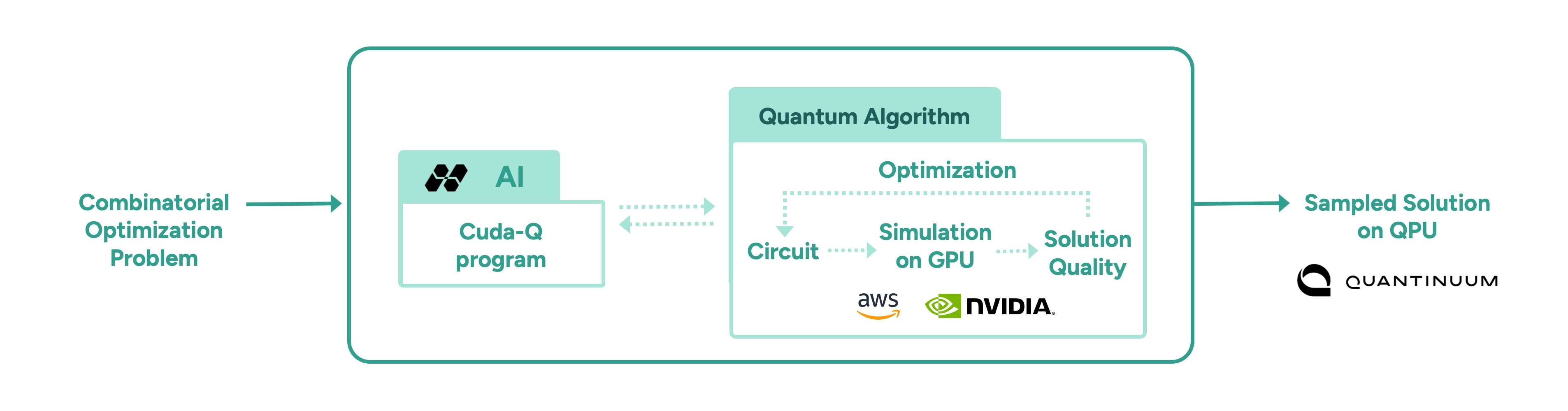

In a follow-up to our recent work with Hiverge using AI to discover algorithms for quantum chemistry, we’ve teamed up with Hiverge, Amazon Web Services (AWS) and NVIDIA to explore using AI to improve algorithms for combinatorial optimization.

With the rapid rise of Large Language Models (LLMs), people started asking “what if AI agents can serve as on-demand algorithm factories?” We have been working with Hiverge, an algorithm discovery company, AWS, and NVIDIA, to explore how LLMs can accelerate quantum computing research.

Hiverge – named for Hive, an AI that can develop algorithms – aims to make quantum algorithm design more accessible to researchers by translating high-level problem descriptions in mostly natural language into executable quantum circuits. The Hive takes the researcher’s initial sketch of an algorithm, as well as special constraints the researcher enumerates, and evolves it to a new algorithm that better meets the researcher’s needs. The output is expressed in terms of a familiar programming language, like Guppy or NVIDIA CUDA-Q, making it particularly easy to implement.

The AI is called a “Hive” because it is a collective of LLM agents, all of which edit the same codebase. In this work, the Hive was made up of LLM powerhouses such as Gemini, ChatGPT, Claude, and Llama, as well as NVIDIA Nemotron, which was accessed through AWS’ Amazon Bedrock service. Many models are included because researchers know that diversity is a strength: just as with a team of human researchers working in a group, a variety of perspectives often leads to the strongest result.

Once the LLMs are assembled, the Hive calls on them to do the work of writing the desired algorithm; no new training is required. The algorithms are then executed and their ‘fitness’ (how well they solve the problem) is measured. Unfit programs do not survive, while the fittest ones evolve to the next generation. This process repeats, much like the evolutionary process of nature itself.

After evolution, the fittest algorithm is selected by the researchers and tested on other instances of the problem. This is a crucial step as the researchers want to understand how well it can generalize.
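The generate, evaluate, and select loop described above can be sketched in plain Python. This is a toy illustration under stated assumptions, not the Hive itself: the step where an LLM agent edits a program is replaced by a random bit flip, and the "program" is just a bitstring scored by the cut size of a small ring graph. All names here (`evolve`, `cut_size`, `flip_one_bit`) are hypothetical.

```python
import random

def evolve(fitness, mutate, population, generations, survivors, rng):
    """Generic evolve-evaluate-select loop.

    In the Hive, the mutate step would be an LLM agent editing a program;
    here it is any function that returns a modified copy of a candidate.
    """
    for _ in range(generations):
        # Rank candidates by fitness and keep only the fittest.
        ranked = sorted(population, key=fitness, reverse=True)
        parents = ranked[:survivors]
        # Survivors carry over unchanged; the rest are mutated copies of them.
        children = [mutate(rng.choice(parents), rng)
                    for _ in range(len(population) - survivors)]
        population = parents + children
    return max(population, key=fitness)

def cut_size(bits):
    """Toy fitness: number of ring-graph edges whose endpoints differ."""
    n = len(bits)
    return sum(bits[i] != bits[(i + 1) % n] for i in range(n))

def flip_one_bit(bits, rng):
    """Toy mutation standing in for an LLM edit."""
    child = list(bits)
    child[rng.randrange(len(child))] ^= 1
    return child

rng = random.Random(0)
start = [[0] * 8 for _ in range(6)]
best = evolve(cut_size, flip_one_bit, start, generations=50, survivors=2, rng=rng)
print(best, cut_size(best))
```

Because the survivors are carried over unchanged, the best fitness never decreases from one generation to the next. Testing the winner on other problem instances, as described above, would be a separate step outside this loop.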

In this most recent work, the joint team explored how AI can assist in the discovery of heuristic quantum optimization algorithms, a class of algorithms aimed at improving efficiency across critical workstreams. These span challenges like optimal power grid dispatch and storage placement, arranging fuel inside nuclear reactors, and molecular design and reaction pathway optimization in drug, material, and chemical discovery—where solutions could translate into maximizing operational efficiency, dramatic reduction in costs, and rapid acceleration in innovation.

In other AI approaches, such as reinforcement learning, models are trained to solve a problem, but the resulting "algorithm" is effectively ‘hidden’ within a neural network. Here, the algorithm is written in Guppy or CUDA-Q (or Python), making it human-interpretable and easier to deploy on new problem instances.

This work leveraged the NVIDIA CUDA-Q platform, running on powerful NVIDIA GPUs made accessible by AWS. Its state-of-the-art accelerated computing was crucial: the research explored highly complex problems that lie at the edge of classical computing capacity. Before running anything on Quantinuum’s quantum computer, the researchers first used NVIDIA accelerated computing to simulate the quantum algorithms and assess their fitness. Once a promising algorithm is discovered, it can then be deployed on quantum hardware, creating an exciting new approach for scaling quantum algorithm design.

More broadly, this work points to one of many ways in which classical compute, AI, and quantum computing are most powerful in symbiosis. AI can be used to improve quantum, as demonstrated here, just as quantum can be used to extend AI. Looking ahead, we envision AI evolving programs that express a combination of algorithmic primitives, much like human mathematicians, such as Peter Shor and Lov Grover, have done. After all, both humans and AI can learn from each other.

As quantum computing power grows, so does the difficulty of error correction. Meeting that demand requires tight integration with high-performance classical computing, which is why we’ve partnered with NVIDIA to push the boundaries of real-time decoding performance.

Realizing the full power of quantum computing requires more than just qubits; it requires error rates low enough to run meaningful algorithms at scale. Physical qubits are sensitive to noise, which limits their capacity to handle calculations beyond a certain scale. To move beyond these limits, physical qubits must be combined into logical qubits, with errors continuously detected and corrected in real time before they can propagate and corrupt the calculation. This approach, known as fault tolerance, is a foundational requirement for any quantum computer intended to solve problems of real-world significance.

Part of the challenge of fault tolerance is the computational complexity of correcting errors in real time. Doing so involves sending the error syndrome data to a classical co-processor, solving a complex mathematical problem on that processor, then sending the resulting correction back to the quantum processor - all fast enough that it doesn’t slow down the quantum computation. For this reason, Quantum Error Correction (QEC) is currently one of the most demanding use-cases for tight coupling between classical and quantum computing.
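The measure, decode, and correct loop described above can be illustrated with the simplest possible example: a classical three-bit repetition code with a lookup-table decoder. This is only a sketch of the data flow. It is not the decoder or the code used in this work, and it ignores the latency constraints that make real-time decoding hard. The function names are hypothetical.

```python
# Syndrome -> index of the bit to flip (None means no correction needed).
LOOKUP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def measure_syndrome(bits):
    """Parity checks of the 3-bit repetition code (bit 0 vs 1, bit 1 vs 2)."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])

def decode(syndrome):
    """Classical decoding step: map a syndrome to a correction."""
    return LOOKUP[syndrome]

def correct(bits, flip):
    """Apply the correction returned by the decoder."""
    fixed = list(bits)
    if flip is not None:
        fixed[flip] ^= 1
    return fixed

noisy = [0, 1, 0]                    # encoded 0 with an error on the middle bit
syndrome = measure_syndrome(noisy)   # data sent to the classical co-processor
flip = decode(syndrome)              # problem solved classically
print(correct(noisy, flip))          # correction sent back and applied
```

For larger codes a lookup table quickly becomes impractical, which is one reason decoding is a demanding classical computation.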

Given the difficulty of the task, we have partnered with NVIDIA, leaders in accelerated computing. With the help of NVIDIA’s ultra-fast GPUs (and the GPU-accelerated BP-OSD decoder developed by NVIDIA as part of the NVIDIA CUDA-Q QEC library), we were able to demonstrate real-time decoding of Helios’ qubits, all in a system that can be connected directly to our quantum processors using NVIDIA NVQLink.

While real-time decoding has been demonstrated before (notably, by our own scientists in this study), previous demonstrations were limited in their scalability and complexity.

In this demonstration, we used Bring’s code, a high-rate code made possible by our all-to-all connectivity, to encode our physical qubits into noise-resilient logical qubits. Once they were encoded, we ran gates on them and also let them idle, to see whether we could catch and correct errors quickly and efficiently. We submitted the circuits via both NVIDIA CUDA-Q and our own Guppy language, underlining our commitment to accessible, ecosystem-friendly quantum computing.

The results were excellent: we were able to perform low-latency decoding that returned results in the time we needed, even for the faster clock cycles that we expect in future generation machines.

A key part of the achievement here is that we performed something called “correlated” decoding. In correlated decoding, you offload work that would normally be performed on the QPU onto the classical decoder. This is because, in ‘standard’ decoding, as you improve your error correction capabilities, it takes more and more time on the QPU. Correlated decoding elides this cost, saving QPU time for the tasks that only the quantum computer can do.

Stay tuned for our forthcoming paper with all the details.