KPMG and Microsoft join Quantinuum in simplifying quantum algorithm development via the cloud

The QIR Alliance, an international effort to improve platform interoperability and support the work of quantum computing developers, has announced a milestone in the industry-wide push to accelerate adoption.

In 1952, facing opposition from scientists who disbelieved her thesis that computer programming could be made more useful by using English words, the mathematician and computer scientist Grace Hopper published her first paper on compilers and wrote a precursor to the modern compiler, the A-0, while working at Remington Rand.

Over subsequent decades, the principles of compilers, whose task is to translate high-level programming languages into machine code, took shape, and new methods were introduced to optimize them. One such innovation was the intermediate representation (IR), introduced to manage the complexity of compilation: it lets a compiler represent the program without loss of information and be broken up into modular phases and components.
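To make the idea of an IR concrete, here is a minimal sketch in Python. It is not any production compiler. A front end lowers an arithmetic expression into three-address IR tuples, and a separate back end turns that IR into instructions for a hypothetical target. The functions `lower_to_ir` and `emit_toy_asm` and the toy instruction syntax are illustrative assumptions.

```python
import ast
import itertools


def lower_to_ir(source: str) -> list[tuple[str, str, str, str]]:
    """Front end: lower an arithmetic expression to three-address IR (op, dest, lhs, rhs)."""
    ops = {ast.Add: "add", ast.Sub: "sub", ast.Mult: "mul"}
    temps = itertools.count()  # generator of fresh temporary names t0, t1, ...
    ir: list[tuple[str, str, str, str]] = []

    def visit(node: ast.AST) -> str:
        if isinstance(node, ast.Name):
            return node.id
        if isinstance(node, ast.Constant):
            return str(node.value)
        if isinstance(node, ast.BinOp) and type(node.op) in ops:
            lhs, rhs = visit(node.left), visit(node.right)
            dest = f"t{next(temps)}"
            ir.append((ops[type(node.op)], dest, lhs, rhs))
            return dest
        raise NotImplementedError(ast.dump(node))

    visit(ast.parse(source, mode="eval").body)
    return ir


def emit_toy_asm(ir: list[tuple[str, str, str, str]]) -> list[str]:
    """Back end: map each IR instruction onto a hypothetical target's syntax."""
    return [f"{op.upper()} {dest}, {lhs}, {rhs}" for op, dest, lhs, rhs in ir]


ir = lower_to_ir("a * b + c")
print(ir)                # [('mul', 't0', 'a', 'b'), ('add', 't1', 't0', 'c')]
print(emit_toy_asm(ir))  # ['MUL t0, a, b', 'ADD t1, t0, c']
```

The two phases are independent. Supporting a new target only requires a new back end that reads the same IR, which is the modularity the paragraph describes.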

This developmental path spawned the modern computer industry, with languages that work across hardware systems, middleware, firmware, operating systems, and software applications. It has also supported the emergence of the huge number of small businesses and professionals who make a living collaborating to solve problems using code that depends on compilers to control the underlying computing hardware.

Now, a similar story is unfolding in quantum computing. Efforts around the world aim to make it simpler for engineers and developers across many sectors to use quantum computers. They do this by translating between high-level programming languages and tools on one side and quantum circuits on the other. Quantum circuits are the combinations of gates that run on quantum computers to generate solutions. Many of these efforts focus on hybrid quantum-classical workflows, which solve a problem by exploiting the strengths of different modes of computation, accessing central processing units (CPUs), graphics processing units (GPUs) and quantum processing units (QPUs) as needed.
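A hybrid quantum-classical workflow can be sketched in a few lines. In this minimal example, a classical optimizer running on the CPU repeatedly calls a quantum evaluation and updates a circuit parameter. `qpu_expectation_z` is a hypothetical stand-in for a QPU call. It is computed exactly here, as ⟨Z⟩ = cos θ for Ry(θ)|0⟩, and is not an Azure Quantum or CUDA-Q API. The gradient uses the standard parameter-shift rule for Pauli-generated rotations.

```python
import math


def qpu_expectation_z(theta: float) -> float:
    """Hypothetical stand-in for a QPU job: <Z> after Ry(theta)|0>, computed exactly here."""
    return math.cos(theta)


def parameter_shift_gradient(f, theta: float) -> float:
    """Parameter-shift rule: the exact gradient from two extra circuit evaluations."""
    shift = math.pi / 2
    return (f(theta + shift) - f(theta - shift)) / 2


def hybrid_minimize(theta: float = 0.1, lr: float = 0.4, steps: int = 100) -> float:
    """Classical gradient descent (CPU) steering repeated quantum evaluations (QPU)."""
    for _ in range(steps):
        theta -= lr * parameter_shift_gradient(qpu_expectation_z, theta)
    return theta


theta_opt = hybrid_minimize()
print(theta_opt, qpu_expectation_z(theta_opt))  # theta approaches pi, <Z> approaches -1
```

On real hardware each call to the quantum function would be a batch of shots with sampling noise. The division of labor stays the same: the quantum device evaluates, and the classical processor decides what to run next.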

Microsoft is a significant contributor to this burgeoning quantum ecosystem, providing access to multiple quantum computing systems through Azure Quantum, and a founding member of the QIR Alliance, a cross-industry effort to make quantum computing source code portable across different hardware systems and modalities and to make quantum computing more useful to engineers and developers. QIR offers an interoperable specification for quantum programs, including a hardware profile designed for Quantinuum’s H-Series quantum computers, and has the capacity to support cross-compiling quantum and classical workflows, encouraging hybrid use-cases.

As one of the largest integrated quantum computing companies in the world, Quantinuum was excited to become a QIR steering member alongside partners including NVIDIA, Oak Ridge National Laboratory, Quantum Circuits Inc., and Rigetti Computing. Quantinuum supports multiple open-source ecosystem tools, including its own family of open-source software development kits and compilers, such as TKET for general-purpose quantum computation and lambeq for quantum natural language processing.

Rapid progress with KPMG and Microsoft

As fellow founding members of QIR, Quantinuum and Microsoft Azure Quantum recently worked with KPMG on a project that combined Microsoft’s Q# with Quantinuum’s System Model H1, Powered by Honeywell. Q# is a stand-alone language designed for the specific needs of quantum computing. Its high level of abstraction lets developers seamlessly blend classical and quantum operations, significantly simplifying the design of hybrid algorithms.

KPMG’s quantum team wanted to translate an existing algorithm into Q# and to take advantage of the distinctive capabilities of Quantinuum’s H-Series, particularly qubit reuse, mid-circuit measurement and all-to-all connectivity. System Model H1 is the first-generation trapped-ion quantum computer built on the quantum charge-coupled device (QCCD) architecture. KPMG accessed the H1-1 QPU with 20 fully connected qubits. H1-1 recently achieved a Quantum Volume of 32,768, a new high-water mark for the industry.
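A quick calculation shows what that figure means. It assumes the standard quantum volume definition: QV = 2^n, where n is the largest number of qubits for which the machine passes square random model circuits of width and depth n with heavy-output probability above 2/3.

```latex
\log_2 \mathrm{QV} = \log_2 32\,768 = \log_2 2^{15} = 15
```

In other words, a QV of 32,768 corresponds to passing the benchmark on 15-qubit, depth-15 circuits, using 15 of H1-1's 20 qubits.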

Q# and QIR provided an abstraction from hardware-specific instructions. This allowed the KPMG team, led by Michael Egan, to make the best use of the H-Series and take advantage of runtime support for measurement-conditioned program flow control and for classical calculations at runtime.

Nathan Rhodes of the KPMG team wrote a tutorial about the project. It shows, step by step, how an algorithm writer would use the KPMG code, and it highlights the particular features of QIR, Q# and the H-Series. It will be the first time that third-party code is made available to end users on Microsoft’s Azure portal.

Microsoft recently announced the roll-out of integrated quantum computing on Azure Quantum, an important milestone in Microsoft’s Hybrid Quantum Computing Architecture, which provides tighter integration between quantum and classical processing.

Fabrice Frachon, Principal PM Lead, Azure Quantum, described this new Azure Quantum capability as a key milestone to unlock a new generation of hybrid algorithms on the path to scaled quantum computing.

The demonstration

The team ran an algorithm designed to solve an estimation problem. Estimation is a promising use case for quantum computing, with potential applications in traffic flow, network optimization, energy generation, storage and distribution, and other infrastructure challenges. The iterative phase estimation algorithm[1] was compiled into quantum circuits from code written in Q# with the QIR toolset. The resulting circuit had approximately 500 gates, including 111 two-qubit gates, ran on three qubits with one qubit reused three times, and achieved a fidelity of 0.92. This is possible because of the H1’s high gate fidelity and low state preparation and measurement (SPAM) error, which enable qubit reuse.

The results compare favorably with the more standard quantum phase estimation (QPE) algorithm described in “Quantum Computation and Quantum Information” by Michael A. Nielsen and Isaac Chuang.

Quantinuum’s H1 had five capabilities that were crucial to this project:

- Qubit reuse

- Mid-circuit measurement

- Bounded loop (a fixed upper limit on how many times the system runs the iterative circuit)

- Classical computation

- Nested functions
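Several of these capabilities show up in a toy version of iterative phase estimation: qubit reuse, mid-circuit measurement, a bounded loop and runtime classical computation. This minimal pure-Python sketch follows the textbook (Kitaev-style) scheme for a phase gate U with U|1⟩ = e^{2πiφ}|1⟩, with the target held in that eigenstate. A single ancilla is measured and reused in every round, and each measured bit feeds a classically computed phase correction into the next round. The function names are hypothetical. This is not KPMG’s Q# code or a QIR program.

```python
import cmath
import math
import random


def measure_ancilla(alpha: float, rng: random.Random) -> int:
    """Ancilla in |+>, phase e^{i*alpha} kicked back onto |1>, then H and a mid-circuit measurement."""
    amp0 = (1 + cmath.exp(1j * alpha)) / 2  # amplitude of |0> after the final Hadamard
    p0 = abs(amp0) ** 2
    return 0 if rng.random() < p0 else 1


def iterative_phase_estimation(phi: float, num_bits: int, seed: int = 0) -> float:
    """Estimate phi = 0.phi_1 phi_2 ... phi_m (binary), one bit per round, least significant first."""
    rng = random.Random(seed)
    bits: dict[int, int] = {}  # j -> measured bit phi_j
    for k in range(num_bits, 0, -1):  # bounded loop: exactly num_bits rounds
        # Runtime classical computation: feedback phase 0.0 phi_{k+1} ... phi_m from earlier rounds
        omega = sum(bits[j] / 2 ** (j - k + 1) for j in range(k + 1, num_bits + 1))
        # Controlled-U^(2^(k-1)) kicks back 2*pi*2^(k-1)*phi; the correction removes known bits
        alpha = 2 * math.pi * (2 ** (k - 1) * phi - omega)
        bits[k] = measure_ancilla(alpha, rng)  # same ancilla, measured and reused each round
    return sum(bits[j] / 2 ** j for j in range(1, num_bits + 1))


print(iterative_phase_estimation(0.625, 3))  # 0.625 (binary 0.101)
```

When φ has an exact m-bit binary expansion, each round's outcome is deterministic (up to floating-point rounding). For other phases the result is a probabilistic m-bit approximation. Because the ancilla is reused, the width stays fixed however many bits are requested; only the number of rounds grows.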

The project underscored the importance of companies experimenting with quantum computing now, so they can identify possible IT issues early and understand both the development environment and how quantum computing integrates with current workflows and processes.

As the global quantum ecosystem continues to advance, collaborative efforts like QIR will play a crucial role in bringing together industrial partners seeking novel solutions to challenging problems, talented developers, engineers, and researchers, and quantum hardware and software companies, which will continue to add deep scientific and engineering knowledge and expertise.

About Quantinuum

Quantinuum, the world’s largest integrated quantum company, pioneers powerful quantum computers and advanced software solutions. Quantinuum’s technology drives breakthroughs in materials discovery, cybersecurity, and next-gen quantum AI. With over 500 employees, including 370+ scientists and engineers, Quantinuum leads the quantum computing revolution across continents.

This month, Quantinuum welcomed its global user community to the first-ever Q-Net Connect, an annual forum designed to spark collaboration, share insights, and accelerate innovation across our full-stack quantum computing platforms. Over two days, users came together not only to learn from one another, but to build the relationships and momentum that we believe will help define the next chapter of quantum computing.

Q-Net Connect 2026 drew over 170 attendees from around the world to Denver, Colorado. They included representatives from commercial enterprises and startups, academia and research institutions, and the public sector and non-profits, all of them users of Quantinuum systems.

The program was packed with inspiring keynotes, technical tracks, and customer presentations. Attendees heard from leaders at Quantinuum, as well as our partners at NVIDIA, JPMorganChase and BlueQubit; professors from the University of New Mexico, the University of Nottingham and Harvard University; national labs, including NIST, Oak Ridge National Laboratory, Sandia National Laboratories and Los Alamos National Laboratory; and other distinguished guests from across the global quantum ecosystem.

Congratulations to Q-Net Connect 2026 Award Recipients!

The mission of the Quantinuum Q-Net user community is to create a space for shared learning, collaboration and connection for those who adopt Quantinuum’s hardware, software and middleware platform. At this year’s Q-Net Connect, we recognized four recipients who made notable efforts to champion this mission.

- JPMorganChase received the ‘Guppy Adopter Award’ for their exemplary adoption of our quantum programming language, Guppy, in their research workflows.

- Phasecraft, a UK and US-based quantum algorithms startup, received the ‘Rising Star’ award for demonstrating exceptional early impact and advancing science using Quantinuum hardware, which they published in a December 2025 paper.

- Qedma, a quantum software startup, received the ‘Startup Partner Engagement’ award for their sustained engagement with Quantinuum platforms dating back to our first commercially deployed quantum computer, H1.

- Anna Dalmasso from the University of Nottingham received our ‘New Student Award’ for her impressive debut project on Quantinuum hardware and for delivering outstanding results as a new Q-Net student user.

Congratulations, again, and thank you to everyone who contributed to the success of the first Q-Net Connect!

Become a Q-Net Member

Q-Net offers year‑round support through user access, developer tools, documentation, trainings, webinars, and events. Members enjoy many benefits, including being the first to hear about exclusive content, publications and promotional offers.

By joining the community, you will be invited to exclusive gatherings to hear about the latest breakthroughs and connect with industry experts driving quantum innovation. Members also get access to Q‑Net Connect recordings and stay connected for future community updates.

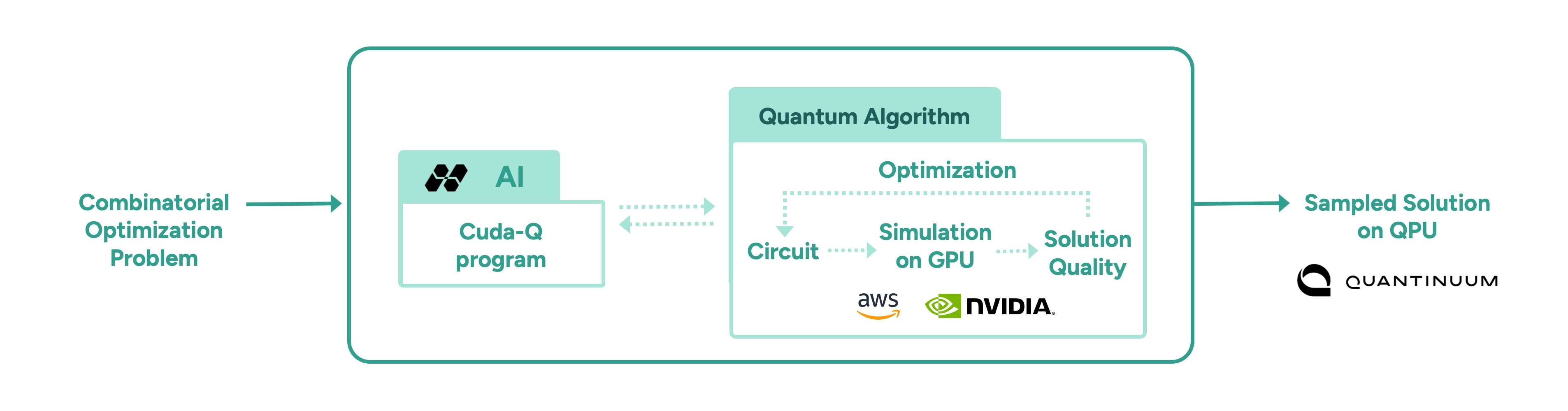

In a follow-up to our recent work with Hiverge using AI to discover algorithms for quantum chemistry, we’ve teamed up with Hiverge, Amazon Web Services (AWS) and NVIDIA to explore using AI to improve algorithms for combinatorial optimization.

With the rapid rise of large language models (LLMs), people started asking: “What if AI agents could serve as on-demand algorithm factories?” Together with Hiverge, an algorithm discovery company, AWS, and NVIDIA, we have been exploring how LLMs can accelerate quantum computing research.

Hiverge (named for Hive, an AI that can develop algorithms) aims to make quantum algorithm design more accessible to researchers. It does this by translating high-level problem descriptions, written mostly in natural language, into executable quantum circuits. The Hive takes the researcher’s initial sketch of an algorithm, along with any special constraints the researcher specifies, and evolves it into a new algorithm that better meets the researcher’s needs. The output is expressed in a familiar programming language, such as Guppy or NVIDIA CUDA-Q, which makes it particularly easy to implement.

The AI is called a “Hive” because it is a collective of LLM agents, all of whom are editing the same codebase. In this work, the Hive was made up of LLM powerhouses such as Gemini, ChatGPT, Claude, Llama, as well as NVIDIA Nemotron, which was accessed through AWS’ Amazon Bedrock service. Many models are included because researchers know that diversity is a strength – just like a team of human researchers working in a group, a variety of perspectives often leads to the strongest result.

Once the LLMs are assembled, the Hive calls on them to do the work of writing the desired algorithm; no new training is required. The algorithms are then executed and their ‘fitness’ (how well they solve the problem) is measured. Unfit programs do not survive, while the fittest ones advance to the next generation. This process repeats, much like evolution in nature.

After evolution, the fittest algorithm is selected by the researchers and tested on other instances of the problem. This is a crucial step as the researchers want to understand how well it can generalize.
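The evolve, score, select loop, followed by a held-out check, can be sketched as a generic evolutionary algorithm. This is a minimal sketch under loud assumptions. Candidates are plain parameter lists rather than programs, and `mutate` is a random perturbation standing in for an LLM agent's code edit. The `fitness` function is a hypothetical toy objective. None of this reflects Hiverge's actual implementation.

```python
import random


def fitness(candidate: list[float], instance: list[float]) -> float:
    """Hypothetical fitness: negative squared distance to an instance's target (higher is better)."""
    return -sum((c - t) ** 2 for c, t in zip(candidate, instance))


def mutate(candidate: list[float], rng: random.Random) -> list[float]:
    """Stand-in for an LLM agent editing the shared codebase: tweak one design choice."""
    child = list(candidate)
    i = rng.randrange(len(child))
    child[i] += rng.gauss(0.0, 0.3)
    return child


def evolve(instance: list[float], pop_size: int = 20, survivors: int = 5,
           generations: int = 50, seed: int = 0) -> tuple[list[float], list[float]]:
    rng = random.Random(seed)
    population = [[rng.uniform(-2, 2) for _ in instance] for _ in range(pop_size)]
    history = []  # best fitness seen in each generation
    for _ in range(generations):
        population.sort(key=lambda c: fitness(c, instance), reverse=True)
        history.append(fitness(population[0], instance))
        parents = population[:survivors]  # unfit candidates do not survive
        children = [mutate(rng.choice(parents), rng) for _ in range(pop_size - survivors)]
        population = parents + children
    best = max(population, key=lambda c: fitness(c, instance))
    return best, history


best, history = evolve([0.5, -1.0, 1.5])
# Generalization check: score the selected candidate on instances it never saw during evolution.
held_out = [[0.4, -1.1, 1.6], [0.6, -0.9, 1.4]]
print([round(fitness(best, inst), 3) for inst in held_out])
```

Keeping the parents in each new generation (elitism) guarantees that the best fitness never decreases. The held-out scores are the separate, honest measure of how well the winner generalizes.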

In this most recent work, the joint team explored how AI can assist in discovering heuristic quantum optimization algorithms, a class of algorithms aimed at improving efficiency in critical workstreams. These span challenges such as optimal power grid dispatch and storage placement, arranging fuel inside nuclear reactors, and molecular design and reaction pathway optimization in drug, material, and chemical discovery. In these areas, solutions could translate into greater operational efficiency, dramatic cost reductions, and rapidly accelerated innovation.

In other AI approaches, such as reinforcement learning, models are trained to solve a problem, but the resulting "algorithm" is effectively ‘hidden’ within a neural network. Here, the algorithm is written in Guppy or CUDA-Q (or Python), making it human-interpretable and easier to deploy on new problem instances.

This work leveraged the NVIDIA CUDA-Q platform, running on powerful NVIDIA GPUs made accessible by AWS. Its state-of-the-art accelerated computing was crucial, because the research explored highly complex problems at the edge of classical computing capacity. Before running anything on Quantinuum’s quantum computer, the researchers first used NVIDIA accelerated computing to simulate the quantum algorithms and assess their fitness. Once a promising algorithm is discovered, it can then be deployed on quantum hardware, creating an exciting new approach for scaling quantum algorithm design.
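A quick memory estimate shows why simulating these algorithms pushes classical hardware. This sketch assumes dense state-vector simulation with one complex128 amplitude (16 bytes) per basis state. It does not claim that this is the simulation method the team used; tensor-network and other methods scale differently.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory for a dense state vector: 2**n complex amplitudes (16 bytes each for complex128)."""
    return (2 ** num_qubits) * bytes_per_amplitude


def human_bytes(n: float) -> str:
    """Format a byte count with binary units (KiB, MiB, ...)."""
    for unit in ("B", "KiB", "MiB", "GiB", "TiB", "PiB"):
        if n < 1024:
            return f"{n:g} {unit}"
        n /= 1024
    return f"{n:g} EiB"


for n in (20, 30, 40, 50):
    print(f"{n} qubits: {human_bytes(statevector_bytes(n))}")
# 20 qubits: 16 MiB
# 30 qubits: 16 GiB
# 40 qubits: 16 TiB
# 50 qubits: 16 PiB
```

Each additional qubit doubles the memory. A few dozen qubits therefore move a simulation from a laptop to a GPU cluster, and eventually beyond any classical machine.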

More broadly, this work points to one of many ways in which classical compute, AI, and quantum computing are most powerful in symbiosis. AI can be used to improve quantum, as demonstrated here, just as quantum can be used to extend AI. Looking ahead, we envision AI evolving programs that express a combination of algorithmic primitives, much like human mathematicians, such as Peter Shor and Lov Grover, have done. After all, both humans and AI can learn from each other.

As quantum computing power grows, so does the difficulty of error correction. Meeting that demand requires tight integration with high-performance classical computing, which is why we’ve partnered with NVIDIA to push the boundaries of real-time decoding performance.

Realizing the full power of quantum computing requires more than just qubits: it requires error rates low enough to run meaningful algorithms at scale. Physical qubits are sensitive to noise, which limits the size of the calculations they can handle. To move beyond these limits, physical qubits must be combined into logical qubits, with errors continuously detected and corrected in real time before they can propagate and corrupt the calculation. This approach, known as fault tolerance, is a foundational requirement for any quantum computer intended to solve problems of real-world significance.
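The simplest illustration of why combining physical qubits helps is the classical bit-flip repetition code. This is a toy model, not the code used on Helios. It assumes independent flips with probability p on each of n copies, decoded by majority vote. The logical value fails only when more than half the copies flip, which for n = 3 gives p_L = 3p² − 2p³.

```python
from math import comb


def repetition_logical_error(p: float, n: int = 3) -> float:
    """Probability that majority vote over n copies fails (more than half flipped), n odd."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


for n in (1, 3, 5, 7):
    print(n, repetition_logical_error(0.01, n))
# With p = 0.01, three copies already give about 3e-4, and more copies suppress errors further.
```

The encoding only pays off below a threshold. For p = 0.6, for example, the three-copy logical error exceeds the physical one. This is why low physical error rates and encoding have to go together.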

Part of the challenge of fault tolerance is the computational complexity of correcting errors in real time. The syndrome data must be sent to a classical co-processor, a complex mathematical problem must be solved there, and the resulting correction must be sent back to the quantum processor, all fast enough not to slow down the quantum computation. For this reason, quantum error correction (QEC) is currently one of the most demanding use cases for tight coupling between classical and quantum computing.
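The syndrome-to-correction round trip can be shown with the smallest possible decoder. This sketch uses a lookup table for a three-bit repetition code. Several parts are simplifying assumptions. The syndrome is computed directly from data bits, standing in for ancilla measurements on hardware. The table decoder stands in for the far more complex BP-OSD decoding used in the demonstration. The `budget_s` latency budget is a hypothetical parameter.

```python
import time

# Syndrome (q0 xor q1, q1 xor q2) -> index of the bit to flip (None means no error detected)
LOOKUP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def measure_syndrome(bits: list[int]) -> tuple[int, int]:
    """Stand-in for ancilla-based parity checks: compute the two parities directly."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


def decode_and_correct(bits: list[int], budget_s: float) -> tuple[list[int], bool]:
    """Decode one syndrome, apply the correction, and report whether it met the latency budget."""
    start = time.perf_counter()
    flip = LOOKUP[measure_syndrome(bits)]
    corrected = list(bits)
    if flip is not None:
        corrected[flip] ^= 1
    within_budget = (time.perf_counter() - start) <= budget_s
    return corrected, within_budget


print(decode_and_correct([0, 1, 0], budget_s=1e-3))  # ([0, 0, 0], True) on typical hardware
```

A table works here only because this code has four syndromes. For large, high-rate codes the number of syndromes explodes. At that point decoding becomes a hard inference problem, which is why GPU acceleration matters for keeping up with the QPU's clock.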

Given the difficulty of the task, we partnered with NVIDIA, a leader in accelerated computing. With the help of NVIDIA’s ultra-fast GPUs (and the GPU-accelerated BP-OSD decoder developed by NVIDIA as part of the NVIDIA CUDA-Q QEC library), we were able to demonstrate real-time decoding of Helios’ qubits. The whole system can be connected directly to our quantum processors using NVIDIA NVQLink.

While real-time decoding has been demonstrated before (notably, by our own scientists in this study), previous demonstrations were limited in their scalability and complexity.

In this demonstration, we used Bring’s code, a high-rate code made possible by our all-to-all connectivity, to encode our physical qubits into noise-resilient logical qubits. Once they were encoded, we both ran gates on them and let them idle, to see whether we could catch and correct errors quickly and efficiently. We submitted the circuits via both NVIDIA CUDA-Q and our own Guppy language, underlining our commitment to accessible, ecosystem-friendly quantum computing.

The results were excellent: we were able to perform low-latency decoding that returned results in the time we needed, even for the faster clock cycles that we expect in future generation machines.

A key part of this achievement is that we performed “correlated” decoding, which offloads work that would normally be performed on the QPU onto the classical decoder. In ‘standard’ decoding, improving error correction capabilities takes more and more time on the QPU. Correlated decoding avoids this cost, saving QPU time for the tasks that only the quantum computer can do.

Stay tuned for our forthcoming paper with all the details.