Quantum gravity in the lab

In the world of physics, ideas can lie dormant for decades before revealing their true power. What begins as a quiet paper in an academic journal can eventually reshape our understanding of the universe itself.

In 1993, nestled deep in the halls of Yale University, physicist Subir Sachdev and his graduate student Jinwu Ye stumbled upon such an idea. Their work, originally aimed at unraveling the mysteries of “spin fluids”, would go on to ignite one of the most surprising and profound connections in modern physics—a bridge between the strange behavior of quantum materials and the warped spacetime of black holes.

Two decades after the paper was published, it would be pulled into the orbit of a radically different domain: quantum gravity. Thanks to work by renowned physicist Alexei Kitaev in 2015, the model found new life as a testing ground for the mind-bending theory of holography, the idea that the universe we live in might be a projection from a lower-dimensional reality.

Holography is an exotic approach to understanding reality where scientists use holograms to describe higher dimensional systems in one less dimension. So, if our world is 3+1 dimensional (3 spatial directions plus time), there exists a 2+1, or 3-dimensional description of it. In the words of Leonard Susskind, a pioneer in quantum holography, "the three-dimensional world of ordinary experience—the universe filled with galaxies, stars, planets, houses, boulders, and people—is a hologram, an image of reality coded on a distant two-dimensional surface."

The “SYK” model, as it is known today, is now considered a quintessential framework for studying strongly correlated quantum phenomena, which occur in everything from superconductors to strange metals, and even in black holes. In fact, the SYK model has also been used to study one of physics’ true final frontiers, quantum gravity, with the authors of the paper calling it “a paradigmatic model for quantum gravity in the lab.”

The SYK model involves Majorana fermions, a type of particle that is its own antiparticle. A key feature of the model is that these fermions are all-to-all connected, leading to strong correlations. This connectivity makes the model particularly challenging to simulate on classical computers, where such correlations are difficult to capture. Our quantum computers, however, natively support all-to-all connectivity, making them a natural fit for studying the SYK model.
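To make the all-to-all structure concrete, here is a minimal sketch that builds a small SYK Hamiltonian as a dense matrix. It rests on assumptions not stated in this post: the standard four-body form of the model, Gaussian couplings with variance 3!J²/N³ (one common convention), and a Jordan-Wigner encoding of the Majorana operators. The function names are illustrative only, and the dense-matrix approach is practical only for small systems.

```python
import itertools
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def kron_all(ops):
    """Tensor product of a list of single-qubit operators."""
    out = np.array([[1.0 + 0j]])
    for op in ops:
        out = np.kron(out, op)
    return out


def majoranas(n_fermions):
    """Jordan-Wigner Majoranas: n_fermions operators on n_fermions // 2 qubits.

    Normalized so that chi_a chi_b + chi_b chi_a = delta_ab.
    """
    n_qubits = n_fermions // 2
    chis = []
    for q in range(n_qubits):
        prefix = [Z] * q
        suffix = [I2] * (n_qubits - q - 1)
        chis.append(kron_all(prefix + [X] + suffix) / np.sqrt(2))
        chis.append(kron_all(prefix + [Y] + suffix) / np.sqrt(2))
    return chis


def syk_hamiltonian(n_fermions, J=1.0, seed=0):
    """Dense SYK Hamiltonian: every set of four fermions interacts (all-to-all)."""
    rng = np.random.default_rng(seed)
    chis = majoranas(n_fermions)
    dim = 2 ** (n_fermions // 2)
    H = np.zeros((dim, dim), dtype=complex)
    # Assumed convention: coupling variance 3! J^2 / N^3.
    sigma = np.sqrt(6.0) * J / n_fermions**1.5
    for i, j, k, l in itertools.combinations(range(n_fermions), 4):
        H += rng.normal(0.0, sigma) * chis[i] @ chis[j] @ chis[k] @ chis[l]
    return H
```

For example, `syk_hamiltonian(8)` sums 70 random four-fermion terms into a 16 x 16 matrix on 4 qubits. The number of terms grows roughly as N⁴, which is one way to see why the model becomes expensive quickly.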

Now, 10 years after Kitaev’s watershed lectures, we’ve made new progress in studying the SYK model. In a new paper, we’ve completed the largest-ever SYK study on a quantum computer. By exploiting our system’s native high fidelity and all-to-all connectivity, as well as our scientific team’s deep expertise across many disciplines, we were able to study the SYK model at a scale three times larger than the previous best experimental attempt.

While this work does not exceed classical techniques, it is very close to the classical state of the art. The biggest-ever classical study was done on 64 fermions, while our recent result, run on our smallest processor (System Model H1), included 24 fermions. Modelling 24 fermions costs us only 12 qubits (plus one ancilla), making it clear that we can quickly scale these studies: our System Model H2 supports 56 qubits (or ~100 fermions), and Helios, which is coming online this year, will have over 90 qubits (or ~180 fermions).

However, working with the SYK model takes more than just qubits. The SYK model has a complex Hamiltonian that is difficult to work with when encoded on a computer—quantum or classical. Studying the real-time dynamics of the SYK model means first representing the initial state on the qubits, then evolving it properly in time according to an intricate set of rules that determine the outcome. This means deep circuits (many circuit operations), which demand very high fidelity, or else an error will occur before the computation finishes.

Our cross-disciplinary team worked to ensure that we could pull off such a large simulation on a relatively small quantum processor, laying the groundwork for quantum advantage in this field.

First, the team adopted a randomized quantum algorithm called TETRIS to run the simulation. By using random sampling, among other methods, the TETRIS algorithm allows one to compute the time evolution of a system without the pernicious discretization errors or sizable overheads that plague other approaches. TETRIS is particularly well suited to the SYK model because the model is highly disordered: simulating the SYK Hamiltonian means averaging over many random Hamiltonians. TETRIS already generates random circuits to compute time evolution, even for a deterministic Hamiltonian. Therefore, when applying TETRIS to SYK, for every shot one can simply generate a random instance of the Hamiltonian and a random TETRIS circuit at the same time. This simple approach requires fewer gates per shot, meaning users can run more shots, which naturally mitigates noise.
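The details of TETRIS are not given here, so the sketch below does not implement it. Instead it uses qDRIFT, a simpler and well-known randomized method, to illustrate the general idea of drawing a fresh random circuit for every shot and averaging the results. Unlike what is described for TETRIS, qDRIFT has an approximation error that shrinks only as the number of steps grows. The two-qubit Hamiltonian, the function names, and all parameter values are assumptions chosen for illustration.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
I2 = np.eye(2, dtype=complex)

# Toy 2-qubit Hamiltonian H = sum_j h_j P_j (illustrative, not the SYK model).
TERMS = [(0.6, np.kron(Z, Z)), (0.3, np.kron(X, I2)), (-0.4, np.kron(I2, X))]


def exact_state(psi0, t):
    """Reference solution exp(-i H t) |psi0> by exact diagonalization."""
    H = sum(h * P for h, P in TERMS)
    evals, evecs = np.linalg.eigh(H)
    return evecs @ (np.exp(-1j * evals * t) * (evecs.conj().T @ psi0))


def qdrift_shot(psi0, t, n_steps, rng):
    """One random circuit: sample terms with probability |h_j| / lambda."""
    coeffs = np.array([h for h, _ in TERMS])
    lam = np.abs(coeffs).sum()
    probs = np.abs(coeffs) / lam
    theta = lam * t / n_steps
    psi = psi0.copy()
    for j in rng.choice(len(TERMS), size=n_steps, p=probs):
        h, P = TERMS[j]
        # exp(-i s theta P) = cos(theta) I - i s sin(theta) P, because P @ P = I.
        psi = np.cos(theta) * psi - 1j * np.sign(h) * np.sin(theta) * (P @ psi)
    return psi


def estimate_observable(obs, psi0, t, n_steps=200, n_shots=300, seed=1):
    """Average an observable over many independently sampled circuits."""
    rng = np.random.default_rng(seed)
    vals = []
    for _ in range(n_shots):
        psi = qdrift_shot(psi0, t, n_steps, rng)
        vals.append(np.real(psi.conj() @ obs @ psi))
    return float(np.mean(vals))
```

For a disordered model, the random couplings could in principle be redrawn inside the same per-shot loop, so that the disorder average and the circuit average share one set of shots.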

In addition, the team “sparsified” the SYK model, which means “pruning” the fermion interactions to reduce the complexity while still maintaining its crucial features. By combining sparsification and the TETRIS algorithm, the team was able to significantly reduce the circuit complexity, allowing it to be run on our machine with high fidelity.
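As a rough illustration of what pruning can look like, the sketch below keeps each four-fermion term independently with a fixed probability and rescales the surviving couplings. The exact pruning rule used in the study is not described here, so the keep-with-probability rule, the variance rescaling by 1/keep_prob, and the function name are all assumptions.

```python
import itertools
import numpy as np


def sparse_syk_couplings(n_fermions, keep_prob, J=1.0, seed=0):
    """Return {(i, j, k, l): coupling}, keeping each term with probability keep_prob."""
    rng = np.random.default_rng(seed)
    # Assumed convention: dense variance 3! J^2 / N^3, divided by keep_prob
    # so the average total interaction strength matches the dense model.
    sigma = np.sqrt(6.0 * J**2 / (n_fermions**3 * keep_prob))
    couplings = {}
    for quad in itertools.combinations(range(n_fermions), 4):
        if rng.random() < keep_prob:
            couplings[quad] = rng.normal(0.0, sigma)
    return couplings
```

With 24 fermions there are 10,626 possible four-fermion terms, so `sparse_syk_couplings(24, keep_prob=0.01)` keeps on the order of a hundred of them, and each dropped term removes gates from the circuit.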

They didn’t stop there. The team also proposed two new noise mitigation techniques, ensuring that they could run circuits deep enough without devolving entirely into noise. The two techniques both worked quite well, and the team was able to show that their algorithm, combined with the noise mitigation, performed significantly better and delivered more accurate results. The perfect agreement between the circuit results and the true theoretical results is a remarkable feat coming from a co-design effort between algorithms and hardware.

As we scale to larger systems, we come closer than ever to realizing quantum gravity in the lab, and thus, answering some of science’s biggest questions.

About Quantinuum

Quantinuum, the world’s largest integrated quantum company, pioneers powerful quantum computers and advanced software solutions. Quantinuum’s technology drives breakthroughs in materials discovery, cybersecurity, and next-gen quantum AI. With over 500 employees, including 370+ scientists and engineers, Quantinuum leads the quantum computing revolution across continents.

This month, Quantinuum welcomed its global user community to the first-ever Q-Net Connect, an annual forum designed to spark collaboration, share insights, and accelerate innovation across our full-stack quantum computing platforms. Over two days, users came together not only to learn from one another, but to build the relationships and momentum that we believe will help define the next chapter of quantum computing.

Q-Net Connect 2026 drew over 170 attendees from around the world to Denver, Colorado, including representatives from commercial enterprises and startups, academia and research institutions, and the public sector and non-profits, all of them users of Quantinuum systems.

The program was packed with inspiring keynotes, technical tracks, and customer presentations. Attendees heard from leaders at Quantinuum, as well as our partners at NVIDIA, JPMorganChase and BlueQubit; professors from the University of New Mexico, the University of Nottingham and Harvard University; national labs, including NIST, Oak Ridge National Laboratory, Sandia National Laboratories and Los Alamos National Laboratory; and other distinguished guests from across the global quantum ecosystem.

Congratulations to Q-Net Connect 2026 Award Recipients!

The mission of the Quantinuum Q-Net user community is to create a space for shared learning, collaboration and connection for those who adopt Quantinuum’s hardware, software and middleware platform. At this year’s Q-Net Connect, we recognized four recipients who made notable contributions to this mission.

- JPMorganChase received the ‘Guppy Adopter Award’ for their exemplary adoption of our quantum programming language, Guppy, in their research workflows.

- Phasecraft, a UK- and US-based quantum algorithms startup, received the ‘Rising Star’ award for demonstrating exceptional early impact and advancing science using Quantinuum hardware, work they published in a December 2025 paper.

- Qedma, a quantum software startup, received the ‘Startup Partner Engagement’ award for their sustained engagement with Quantinuum platforms dating back to our first commercially deployed quantum computer, H1.

- Anna Dalmasso from the University of Nottingham received our ‘New Student Award’ for her impressive debut project on Quantinuum hardware and for delivering outstanding results as a new Q-Net student user.

Congratulations, again, and thank you to everyone who contributed to the success of the first Q-Net Connect!

Become a Q-Net Member

Q-Net offers year‑round support through user access, developer tools, documentation, trainings, webinars, and events. Members enjoy many exclusive benefits, including being the first to hear about new content, publications and promotional offers.

By joining the community, you will be invited to exclusive gatherings to hear about the latest breakthroughs and connect with industry experts driving quantum innovation. Members also get access to Q‑Net Connect recordings and stay connected for future community updates.

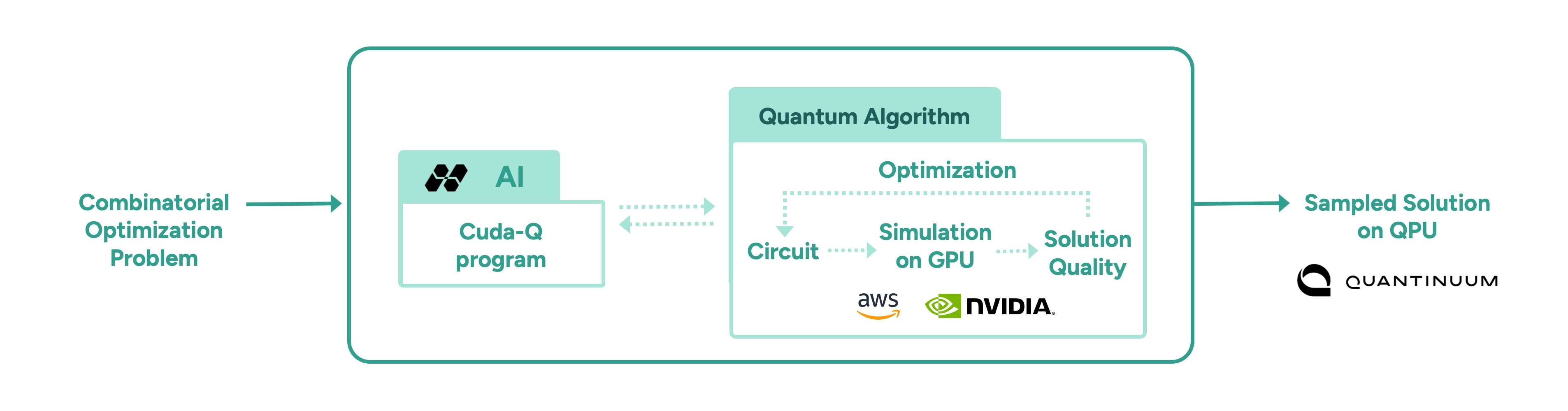

In a follow-up to our recent work with Hiverge using AI to discover algorithms for quantum chemistry, we’ve teamed up with Hiverge, Amazon Web Services (AWS) and NVIDIA to explore using AI to improve algorithms for combinatorial optimization.

With the rapid rise of Large Language Models (LLMs), people have started asking, “What if AI agents could serve as on-demand algorithm factories?” We have been working with Hiverge, an algorithm discovery company, along with AWS and NVIDIA, to explore how LLMs can accelerate quantum computing research.

Hiverge – named for Hive, an AI that can develop algorithms – aims to make quantum algorithm design more accessible to researchers by translating high-level problem descriptions in mostly natural language into executable quantum circuits. The Hive takes the researcher’s initial sketch of an algorithm, as well as special constraints the researcher enumerates, and evolves it to a new algorithm that better meets the researcher’s needs. The output is expressed in terms of a familiar programming language, like Guppy or NVIDIA CUDA-Q, making it particularly easy to implement.

The AI is called a “Hive” because it is a collective of LLM agents, all of which edit the same codebase. In this work, the Hive was made up of LLM powerhouses such as Gemini, ChatGPT, Claude, and Llama, as well as NVIDIA Nemotron, which was accessed through AWS’ Amazon Bedrock service. Many models are included because researchers know that diversity is a strength: just as with a team of human researchers working in a group, a variety of perspectives often leads to the strongest result.

Once the LLMs are assembled, the Hive calls on them to do the work of writing the desired algorithm; no new training is required. The algorithms are then executed and their ‘fitness’ (how well they solve the problem) is measured. Unfit programs do not survive, while the fittest ones evolve to the next generation. This process repeats, much like the evolutionary process of nature itself.
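The generate, score, select loop described above can be sketched with a toy example. This is not the Hive: the candidates here are simple 0/1 labelings for a small MaxCut problem rather than programs, and a random bit flip stands in for an LLM agent proposing an edit. The graph, the function names, and the population settings are all assumptions made for illustration.

```python
import random

# Toy problem: MaxCut on a 6-node ring. A "candidate" is a 0/1 label per node.
EDGES = [(i, (i + 1) % 6) for i in range(6)]


def fitness(candidate):
    """Number of edges whose endpoints carry different labels."""
    return sum(candidate[a] != candidate[b] for a, b in EDGES)


def mutate(candidate, rng):
    """Stand-in for an LLM agent proposing an edit: flip one random entry."""
    child = list(candidate)
    idx = rng.randrange(len(child))
    child[idx] = 1 - child[idx]
    return child


def evolve(pop_size=8, generations=30, seed=0):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(6)] for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]  # unfit candidates are dropped
        children = [mutate(rng.choice(survivors), rng) for _ in survivors]
        population = survivors + children        # fittest carry into the next generation
    return max(population, key=fitness)
```

Because the survivors are carried over unchanged, the best fitness in the population can never decrease from one generation to the next.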

After evolution, the fittest algorithm is selected by the researchers and tested on other instances of the problem. This is a crucial step as the researchers want to understand how well it can generalize.

In this most recent work, the joint team explored how AI can assist in the discovery of heuristic quantum optimization algorithms, a class of algorithms aimed at improving efficiency across critical workstreams. These span challenges like optimal power grid dispatch and storage placement, arranging fuel inside nuclear reactors, and molecular design and reaction pathway optimization in drug, material, and chemical discovery. Solutions to these problems could translate into maximized operational efficiency, dramatically reduced costs, and rapidly accelerated innovation.

In other AI approaches, such as reinforcement learning, models are trained to solve a problem, but the resulting "algorithm" is effectively ‘hidden’ within a neural network. Here, the algorithm is written in Guppy or CUDA-Q (or Python), making it human-interpretable and easier to deploy on new problem instances.

This work leveraged the NVIDIA CUDA-Q platform, running on powerful NVIDIA GPUs made accessible by AWS. Its state-of-the-art accelerated computing was crucial; the research explored highly complex problems, challenges that lie at the edge of classical computing capacity. Before running anything on Quantinuum’s quantum computer, the researchers first used NVIDIA accelerated computing to simulate the quantum algorithms and assess their fitness. Once a promising algorithm is discovered, it can then be deployed on quantum hardware, creating an exciting new approach for scaling quantum algorithm design.

More broadly, this work points to one of many ways in which classical compute, AI, and quantum computing are most powerful in symbiosis. AI can be used to improve quantum, as demonstrated here, just as quantum can be used to extend AI. Looking ahead, we envision AI evolving programs that express a combination of algorithmic primitives, much like human mathematicians, such as Peter Shor and Lov Grover, have done. After all, both humans and AI can learn from each other.

As quantum computing power grows, so does the difficulty of error correction. Meeting that demand requires tight integration with high-performance classical computing, which is why we’ve partnered with NVIDIA to push the boundaries of real-time decoding performance.

Realizing the full power of quantum computing requires more than just qubits; it requires error rates low enough to run meaningful algorithms at scale. Physical qubits are sensitive to noise, which limits their capacity to handle calculations beyond a certain scale. To move beyond these limits, physical qubits must be combined into logical qubits, with errors continuously detected and corrected in real time before they can propagate and corrupt the calculation. This approach, known as fault tolerance, is a foundational requirement for any quantum computer intended to solve problems of real-world significance.

Part of the challenge of fault tolerance is the computational complexity of correcting errors in real time. Doing so involves sending the error syndrome data to a classical co-processor, solving a complex mathematical problem on that processor, then sending the resulting correction back to the quantum processor, all fast enough that it doesn’t slow down the quantum computation. For this reason, Quantum Error Correction (QEC) is currently one of the most demanding use cases for tight coupling between classical and quantum computing.
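The round trip described above (measure a syndrome, decode it classically, send back a correction) can be illustrated with a deliberately tiny example. The sketch uses a classical three-bit repetition code with a lookup-table decoder, which is far simpler than the codes and decoders used on real hardware; the function names are illustrative only.

```python
# Syndrome -> index of the bit to flip (None means no correction is needed).
LOOKUP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def measure_syndrome(bits):
    """Parity checks on neighbouring bits; they reveal errors, not the data."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


def decode(syndrome):
    """Classical decoding step: map a syndrome to the most likely single error."""
    return LOOKUP[syndrome]


def correct(bits, flip):
    """Apply the correction chosen by the decoder."""
    out = list(bits)
    if flip is not None:
        out[flip] ^= 1
    return out


def qec_round(bits):
    syndrome = measure_syndrome(bits)  # processor -> classical co-processor
    flip = decode(syndrome)            # solve the decoding problem
    return correct(bits, flip)         # correction sent back to the processor
```

Here the decoding problem is a four-entry table. For larger codes the decoder must solve a much harder inference problem within each cycle, which is why decoding speed matters.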

Given the difficulty of the task, we have partnered with NVIDIA, a leader in accelerated computing. With the help of NVIDIA’s ultra-fast GPUs (and the GPU-accelerated BP-OSD decoder developed by NVIDIA as part of the NVIDIA CUDA-Q QEC library), we were able to demonstrate real-time decoding of Helios’ qubits, all in a system that can be connected directly to our quantum processors using NVIDIA NVQLink.

While real-time decoding has been demonstrated before (notably, by our own scientists in this study), previous demonstrations were limited in their scalability and complexity.

In this demonstration, we used Bring’s code, a high-rate code that is possible with our all-to-all connectivity, to encode our physical qubits into noise-resilient logical qubits. Once we had them encoded, we ran gates on them and also let them idle to see if we could catch and correct errors quickly and efficiently. We submitted the circuits via both NVIDIA CUDA-Q and our own Guppy language, underlining our commitment to accessible, ecosystem-friendly quantum computing.

The results were excellent: we were able to perform low-latency decoding that returned results in the time we needed, even for the faster clock cycles that we expect in future generation machines.

A key part of the achievement here is that we performed something called “correlated” decoding. In correlated decoding, you offload work that would normally be performed on the QPU onto the classical decoder. This is because, in ‘standard’ decoding, as you improve your error correction capabilities, it takes more and more time on the QPU. Correlated decoding elides this cost, saving QPU time for the tasks that only the quantum computer can do.

Stay tuned for our forthcoming paper with all the details.